Grid5000:Home

Grid'5000 is a precursor infrastructure of SLICES-RI, Scientific Large Scale Infrastructure for Computing/Communication Experimental Studies.

Grid'5000 is a large-scale and flexible testbed for experiment-driven research in all areas of computer science, with a focus on parallel and distributed computing, including Cloud, HPC, Big Data and AI.

Random pick of publications

Five random publications that benefited from Grid'5000 (at least 2949 overall):

- Matthieu Simonin, Anne-Cécile Orgerie. Méthodologies de calcul d'empreinte carbone sur une plateforme de calcul : exemple du site Grid'5000 de Rennes. JRES 2024 - Journées réseaux de l'enseignement et de la recherche, Renater, Dec 2024, Rennes, France. pp.1-14. hal-04893984

- Pierre Epron, Gaël Guibon, Miguel Couceiro. ORPAILLEUR SyNaLP at CLEF 2024 Task 2: Good Old Cross Validation for Large Language Models Yields the Best Humorous Detection. Working Notes of the Conference and Labs of the Evaluation Forum (CLEF 2024), Sep 2024, Grenoble, France. pp.1841-1856. hal-04696012

- Théophile Bastian. Towards automatic characterization of microarchitectural behaviour form performance modeling of computing kernels : an analysis of the Cortex A72 and Intel microarchitectures. Hardware Architecture [cs.AR]. Université Grenoble Alpes [2020-....], 2024. English. NNT : 2024GRALM072. tel-05116111

- Sewade Ogun, Abraham T. Owodunni, Tobi Olatunji, Eniola Alese, Babatunde Oladimeji, et al. 1000 African Voices: Advancing inclusive multi-speaker multi-accent speech synthesis. Interspeech 2024, Sep 2024, Kos Island, Greece. hal-04663033

- Chih-Kai Huang, Guillaume Pierre. Aggregate Monitoring for Geo-Distributed Kubernetes Cluster Federations. IEEE Transactions on Cloud Computing, 2024, 12 (4), pp.1449-1462. 10.1109/TCC.2024.3482574. hal-04736577

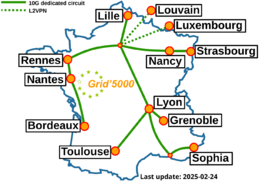

Grid'5000 sites

Current funding

- INRIA
- CNRS
- Universities: IMT Atlantique
- Regional councils: Aquitaine