Grid5000:Home: Difference between revisions

(One intermediate revision by the same user not shown)

Line 3:
  |- valign="top"
  |bgcolor="#888888" style="border:1px solid #cccccc;padding:2em;padding-top:1em;"|
- [[File:Slices-ri-white-color.png|260px|left]]
+ [[File:Slices-ri-white-color.png|260px|left|link=https://www.slices-ri.eu]]
- <b>Grid'5000 is a precursor infrastructure of [
+ <b>Grid'5000 is a precursor infrastructure of [https://www.slices-ri.eu SLICES-RI], Scientific Large Scale Infrastructure for Computing/Communication Experimental Studies.</b>
  <br/>
  Content on this website is partly outdated. Technical information remains relevant.

| Line 12: | Line 12: | ||

|- valign="top" | |- valign="top" | ||

|bgcolor="#f5fff5" style="border:1px solid #cccccc;padding:1em;padding-top:0.5em;"| | |bgcolor="#f5fff5" style="border:1px solid #cccccc;padding:1em;padding-top:0.5em;"| | ||

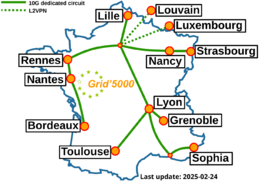

[[Image:g5k-backbone.png|thumbnail|260px|right|Grid'5000]] | [[Image:g5k-backbone.png|thumbnail|260px|right|Grid'5000|link=https://www.grid5000.fr]] | ||

'''Grid'5000 is a large-scale and flexible testbed for experiment-driven research in all areas of computer science, with a focus on parallel and distributed computing, including Cloud, HPC, Big Data and AI.''' | '''Grid'5000 is a large-scale and flexible testbed for experiment-driven research in all areas of computer science, with a focus on parallel and distributed computing, including Cloud, HPC, Big Data and AI.''' | ||

Line 75:
  | width="50%" bgcolor="#f5f5f5" valign="top" align="center" style="border:1px solid #cccccc;padding:1em;padding-top:0.5em;"|
  ===INRIA===
- [[Image:Logo_INRIA.gif|300px]]
+ [[Image:Logo_INRIA.gif|300px|link=https://www.inria.fr]]
  | width="50%" bgcolor="#f5f5f5" valign="top" align="center" style="border:1px solid #cccccc;padding:1em;padding-top:0.5em;"|
  ===CNRS===
- [[Image:CNRS-filaire-Quadri.png|125px]]
+ [[Image:CNRS-filaire-Quadri.png|125px|link=https://www.cnrs.fr]]
  |-
  | width="50%" bgcolor="#f5f5f5" valign="top" align="center" style="border:1px solid #cccccc;padding:1em;padding-top:0.5em;"|

Latest revision as of 11:02, 11 July 2025

Grid'5000 is a precursor infrastructure of SLICES-RI, Scientific Large Scale Infrastructure for Computing/Communication Experimental Studies.

Grid'5000 is a large-scale and flexible testbed for experiment-driven research in all areas of computer science, with a focus on parallel and distributed computing, including Cloud, HPC, Big Data and AI. Key features:

Older documents:

Random pick of publications

Five random publications that benefited from Grid'5000 (at least 2943 overall):

- Youenn Merel Jourdan, Mathieu Acher, Camille Maumet. In the Search for Truth: Navigating Variability in Neuroimaging Software Pipelines. SPLC 2025 - 29th ACM International Systems and Software Product Line Conference, Association for Computing Machinery (ACM), Sep 2025, Coruna, Spain. pp.129-135, 10.1145/3744915.3748470. hal-05158426

- Khaled Arsalane, Guillaume Pierre, Shadi Ibrahim. Toward Stream Processing Elasticity in Realistic Geo-Distributed Environments. IC2E 2024 - 12th IEEE International Conference on Cloud Engineering, IEEE, Sep 2024, Paphos, Cyprus. pp.1-9. hal-04655408v2

- Hugo Thomas, Guillaume Gravier, Pascale Sébillot. Recherche de relation à partir d’un seul exemple fondée sur un modèle N-way K-shot : une histoire de distracteurs. 35èmes Journées d'Études sur la Parole (JEP 2024) 31ème Conférence sur le Traitement Automatique des Langues Naturelles (TALN 2024) 26ème Rencontre des Étudiants Chercheurs en Informatique pour le Traitement Automatique des Langues (RECITAL 2024), Jul 2024, Toulouse, France. pp.157-168. hal-04623015

- Vania Marangozova, Angelo Gennuso. K8S Auto-Scaler Coordinators. Université Grenoble - Alpes. 2024. hal-04963348

- François Lemaire, Louis Roussel. Parameter Estimation using Integral Equations. Maple Transactions, 2024, Proceedings of the Maple Conference 2023, 4 (1), 10.5206/mt.v4i1.17126. hal-04560846v2

Latest news

Cluster Sasquatch is now in default queue at Grenoble

We are pleased to announce that the Sasquatch [1] cluster is now available in the default queue.

Sasquatch is a cluster composed of 2 HPE RL300 nodes, each featuring:

This cluster was funded by the PEPR IA.

[1] https://www.grid5000.fr/w/Grenoble:Hardware#sasquatch

[2] https://amperecomputing.com/briefs/ampere-altra-family-product-brief

Best regards, Grid'5000 Technical Team

-- Grid'5000 Team 10:15, 11 February 2026 (CEST)

Cluster Spirou is now in default queue at Louvain

We are pleased to announce that the Spirou [1] cluster of the newly installed Louvain site is now available in the default queue.

Spirou is a cluster composed of 8 Lenovo ThinkSystem SR630 V2 nodes, each featuring:

Be aware that we have observed I/O inconsistencies on this cluster.

We advise users to take this into account when running experiments on the cluster. See the following bug for more information: https://intranet.grid5000.fr/bugzilla/show_bug.cgi?id=16938

This cluster was funded by the Fonds de la Recherche Scientifique – FNRS (F.R.S.–FNRS), and its operation is supported by F.R.S.–FNRS and the Wallonia region (SPW).

[1] https://www.grid5000.fr/w/Louvain:Hardware#spirou

Best regards,

Grid'5000 Technical Team

-- Grid'5000 Team 10:24, 12 January 2026 (CEST)

End of support for centOS7/8 and centOSStream8 environments

Support for the centOS7/8 and centOSStream8 kadeploy environments has ended, due to the end of upstream support and compatibility issues with recent hardware.

The last versions of the centOS7 environments (version 2024071117), centOS8 environments (version 2024071119) and centOSStream8 environments (version 2024070316) will remain available on /grid5000. Older versions can still be accessed in the archive directory (see /grid5000/README.unmaintained-envs for more information).

-- Grid'5000 Team 08:44, 4 December 2025 (CEST)

Ecotaxe cluster is now in default queue at Nantes

We are pleased to announce that the ecotaxe cluster of Nantes is now available in the default queue.

As a reminder, ecotaxe is a cluster composed of 2 HPE ProLiant DL385 Gen10 Plus v2 servers [1].

Each node features:

To submit a job on this cluster, the following command may be used:

oarsub -t exotic -p ecotaxe
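For scripted experiments, the same submission can be assembled programmatically. The following is only a minimal sketch: the helper name `build_oarsub_command` is hypothetical, and it uses nothing beyond the `-t` and `-p` options quoted in the command above. It builds the command line without running it, so it can be inspected before being handed to a job launcher.

```python
import shlex


def build_oarsub_command(cluster, job_type="exotic"):
    """Return the argument list for the oarsub call quoted in the announcement.

    Hypothetical helper: only the -t (job type) and -p (property filter)
    options from the announcement are used; nothing is executed here.
    """
    return ["oarsub", "-t", job_type, "-p", cluster]


command = build_oarsub_command("ecotaxe")
# Render the argument list as a shell-safe string for logging or review.
print(shlex.join(command))
```

Keeping the command as an argument list rather than a single string avoids quoting problems if it is later passed to `subprocess.run` on a frontend where `oarsub` is available.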

This cluster is co-funded by Région Pays de la Loire, FEDER and REACT EU via the CPER SAMURAI [3].

[1] https://www.grid5000.fr/w/Nantes:Hardware#ecotaxe

[2] The observed throughput depends on multiple parameters such as the workload, the number of streams, ...

[3] https://www.imt-atlantique.fr/fr/recherche-innovation/collaborer/projet/samurai

-- Grid'5000 Team 14:10, 02 December 2025 (CET)

Grid'5000 sites

Current funding

INRIA

CNRS

Universities

IMT Atlantique

Regional councils

Aquitaine