Grid5000:Home: Difference between revisions


| (9 intermediate revisions by 5 users not shown) | |||

| Line 1: | Line 1: | ||

__NOTOC__ __NOEDITSECTION__ | __NOTOC__ __NOEDITSECTION__ | ||

{|width="95%" | |||

|- valign="top" | |||

|bgcolor="#888888" style="border:1px solid #cccccc;padding:2em;padding-top:1em;"| | |||

[[File:Slices-ri-white-color.png|260px|left|link=https://www.slices-ri.eu]] | |||

<b>Grid'5000 is a precursor infrastructure of [https://www.slices-ri.eu SLICES-RI], Scientific Large Scale Infrastructure for Computing/Communication Experimental Studies.</b> | |||

<br/> | |||

Content on this website is partly outdated. Technical information remains relevant. | |||

|} | |||

{|width="95%" | {|width="95%" | ||

|- valign="top" | |- valign="top" | ||

|bgcolor="#f5fff5" style="border:1px solid #cccccc;padding:1em;padding-top:0.5em;"| | |bgcolor="#f5fff5" style="border:1px solid #cccccc;padding:1em;padding-top:0.5em;"| | ||

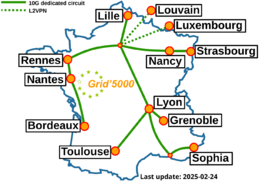

[[Image: | [[Image:g5k-backbone.png|thumbnail|260px|right|Grid'5000|link=https://www.grid5000.fr]] | ||

'''Grid'5000 is a large-scale and flexible testbed for experiment-driven research in all areas of computer science, with a focus on parallel and distributed computing including Cloud, HPC | '''Grid'5000 is a large-scale and flexible testbed for experiment-driven research in all areas of computer science, with a focus on parallel and distributed computing, including Cloud, HPC, Big Data and AI.''' | ||

Key features: | Key features: | ||

* provides '''access to a large amount of resources''': | * provides '''access to a large amount of resources''': 15000 cores, 800 compute-nodes grouped in homogeneous clusters, and featuring various technologies: PMEM, GPU, SSD, NVMe, 10G and 25G Ethernet, Infiniband, Omni-Path | ||

* '''highly reconfigurable and controllable''': researchers can experiment with a fully customized software stack thanks to bare-metal deployment features, and can isolate their experiment at the networking layer | * '''highly reconfigurable and controllable''': researchers can experiment with a fully customized software stack thanks to bare-metal deployment features, and can isolate their experiment at the networking layer | ||

* '''advanced monitoring and measurement features for traces collection of networking and power consumption''', providing a deep understanding of experiments | * '''advanced monitoring and measurement features for traces collection of networking and power consumption''', providing a deep understanding of experiments | ||

| Line 15: | Line 24: | ||

<br> | <br> | ||

Read more about our [[Team|teams]], our [[Publications|publications]], and the [[Grid5000:UsagePolicy|usage policy]] of the testbed. Then [[Grid5000:Get_an_account|get an account]], and learn how to use the testbed with our [[Getting_Started|Getting Started tutorial]] and the rest of our [[:Category:Portal:User|Users portal]]. | Read more about our [[Team|teams]], our [[Publications|publications]], and the [[Grid5000:UsagePolicy|usage policy]] of the testbed. Then [[Grid5000:Get_an_account|get an account]], and learn how to use the testbed with our [[Getting_Started|Getting Started tutorial]] and the rest of our [[:Category:Portal:User|Users portal]]. | ||

<br> | <br> | ||

Published documents and presentations: | |||

* [[Media:Grid5000.pdf|Presentation of Grid'5000]] (April 2019) | * [[Media:Grid5000.pdf|Presentation of Grid'5000]] (April 2019) | ||

* [https://www.grid5000.fr/mediawiki/images/Grid5000_science-advisory-board_report_2018.pdf Report from the Grid'5000 Science Advisory Board (2018)] | * [https://www.grid5000.fr/mediawiki/images/Grid5000_science-advisory-board_report_2018.pdf Report from the Grid'5000 Science Advisory Board (2018)] | ||

| Line 39: | Line 46: | ||

==Latest news== | ==Latest news== | ||

<rss max=4 item-max-length="2000">https://www.grid5000.fr/ | <rss max=4 item-max-length="2000">https://www.grid5000.fr/rss/G5KNews.php</rss> | ||

---- | ---- | ||

[[News|Read more news]] | [[News|Read more news]] | ||

| Line 50: | Line 57: | ||

* [[Lille:Home|Lille]] | * [[Lille:Home|Lille]] | ||

* [[Luxembourg:Home|Luxembourg]] | * [[Luxembourg:Home|Luxembourg]] | ||

* [[Louvain:Home|Louvain]] | |||

|width="33%" bgcolor="#f5f5f5" style="border:1px solid #cccccc;padding:1em;padding-top:0.5em;"| | |width="33%" bgcolor="#f5f5f5" style="border:1px solid #cccccc;padding:1em;padding-top:0.5em;"| | ||

* [[Lyon:Home|Lyon]] | * [[Lyon:Home|Lyon]] | ||

* [[Nancy:Home|Nancy]] | * [[Nancy:Home|Nancy]] | ||

* [[Nantes:Home|Nantes]] | * [[Nantes:Home|Nantes]] | ||

* [[Rennes:Home|Rennes]] | |||

|width="33%" bgcolor="#f5f5f5" style="border:1px solid #cccccc;padding:1em;padding-top:0.5em;"| | |width="33%" bgcolor="#f5f5f5" style="border:1px solid #cccccc;padding:1em;padding-top:0.5em;"| | ||

* [[Sophia:Home|Sophia-Antipolis]] | * [[Sophia:Home|Sophia-Antipolis]] | ||

* [[Strasbourg:Home|Strasbourg]] | |||

* [[Toulouse:Home|Toulouse]] | * [[Toulouse:Home|Toulouse]] | ||

|- | |- | ||

| Line 62: | Line 71: | ||

== Current funding == | == Current funding == | ||

{|width="100%" cellspacing="3" | {|width="100%" cellspacing="3" | ||

|- | |- | ||

| width="50%" bgcolor="#f5f5f5" valign="top" align="center" style="border:1px solid #cccccc;padding:1em;padding-top:0.5em;"| | | width="50%" bgcolor="#f5f5f5" valign="top" align="center" style="border:1px solid #cccccc;padding:1em;padding-top:0.5em;"| | ||

===INRIA=== | ===INRIA=== | ||

[[Image:Logo_INRIA.gif|300px]] | [[Image:Logo_INRIA.gif|300px|link=https://www.inria.fr]] | ||

| width="50%" bgcolor="#f5f5f5" valign="top" align="center" style="border:1px solid #cccccc;padding:1em;padding-top:0.5em;"| | | width="50%" bgcolor="#f5f5f5" valign="top" align="center" style="border:1px solid #cccccc;padding:1em;padding-top:0.5em;"| | ||

===CNRS=== | ===CNRS=== | ||

[[Image:CNRS-filaire-Quadri.png|125px]] | [[Image:CNRS-filaire-Quadri.png|125px|link=https://www.cnrs.fr]] | ||

|- | |- | ||

| width="50%" bgcolor="#f5f5f5" valign="top" align="center" style="border:1px solid #cccccc;padding:1em;padding-top:0.5em;"| | | width="50%" bgcolor="#f5f5f5" valign="top" align="center" style="border:1px solid #cccccc;padding:1em;padding-top:0.5em;"| | ||

===Universities=== | ===Universities=== | ||

IMT Atlantique<br/> | |||

Université Grenoble Alpes, Grenoble INP<br/> | Université Grenoble Alpes, Grenoble INP<br/> | ||

Université Rennes 1, Rennes<br/> | Université Rennes 1, Rennes<br/> | ||

Latest revision as of 11:02, 11 July 2025


Grid'5000 is a precursor infrastructure of SLICES-RI, Scientific Large Scale Infrastructure for Computing/Communication Experimental Studies.


Grid'5000 is a large-scale and flexible testbed for experiment-driven research in all areas of computer science, with a focus on parallel and distributed computing, including Cloud, HPC, Big Data and AI. Key features:

Older documents:


Random pick of publications

Five random publications that benefited from Grid'5000 (at least 2940 overall):

- Cédric Prigent, Alexandru Costan, Gabriel Antoniu, Loïc Cudennec. Enabling Federated Learning across the Computing Continuum: Systems, Challenges and Future Directions. Future Generation Computer Systems, 2024, 160, pp. 767-783. 10.1016/j.future.2024.06.043. hal-04659211

- Sofía Callejas, Hernan Lira, Andrew Berry, Luis Martí, Nayat Sanchez-Pi. No Plankton Left Behind: Preliminary results on massive plankton image recognition. 1th Latin American High Performance Computing Conference - CARLA 2024, Ginés Guerrero; Jaime San Martín; Esteban Meneses; Carlos J Barrios H; Carla Osthoff; Jose M Monsalve Diaz, Sep 2024, Santiago de Chile, Chile. hal-04803602

- Cédric Boscher, Nawel Benarba, Fatima Elhattab, Sara Bouchenak. Personalized Privacy-Preserving Federated Learning. Proceedings of the 25th International Middleware Conference, Dec 2024, Hong Kong, China. pp. 454-466, 10.1145/3652892.3700785. hal-04770214

- Jan Aalmoes. Intelligence artificielle pour des services moraux : Concilier équité et confidentialité [Artificial intelligence for moral services: reconciling fairness and confidentiality]. Artificial Intelligence [cs.AI]. INSA de Lyon, 2024. In French. NNT: 2024ISAL0126. tel-05014177

- François Portier, Lionel Truquet, Ikko Yamane. Nearest Neighbor Sampling for Covariate Shift Adaptation. 2024. hal-04645530

Latest news


Cluster Sasquatch is now in default queue at Grenoble

We are pleased to announce that the Sasquatch [1] cluster is now available in the default queue.

Sasquatch is a cluster composed of 2 HPE RL300 nodes, each featuring:

This cluster was funded by the PEPR IA.

[1] https://www.grid5000.fr/w/Grenoble:Hardware#sasquatch

[2] https://amperecomputing.com/briefs/ampere-altra-family-product-brief

Best regards, Grid'5000 Technical Team

-- Grid'5000 Team 10:15, 11 February 2026 (CEST)


Cluster Spirou is now in default queue at Louvain

We are pleased to announce that the Spirou [1] cluster of the newly installed Louvain site is now available in the default queue.

Spirou is a cluster composed of 8 Lenovo ThinkSystem SR630 V2 nodes, each featuring:

Be aware that we have observed I/O inconsistencies on this cluster.

We advise users to take this into account when running experiments on the cluster. See the following bug for more information: https://intranet.grid5000.fr/bugzilla/show_bug.cgi?id=16938

This cluster was funded by the Fonds de la Recherche Scientifique – FNRS (F.R.S.–FNRS), and its operation is supported by F.R.S.–FNRS and the Wallonia region (SPW).

[1] https://www.grid5000.fr/w/Louvain:Hardware#spirou

Best regards,

Grid'5000 Technical Team

-- Grid'5000 Team 10:24, 12 January 2026 (CEST)


End of support for centOS7/8 and centOSStream8 environments

Support for the centOS7/8 and centOSStream8 kadeploy environments has ended, due to the end of upstream support and to compatibility issues with recent hardware.

The last versions of the centOS7 environments (version 2024071117), centOS8 environments (version 2024071119) and centOSStream8 environments (version 2024070316) will remain available in /grid5000. Older versions can still be accessed in the archive directory (see /grid5000/README.unmaintained-envs for more information).

-- Grid'5000 Team 08:44, 4 December 2025 (CEST)


Ecotaxe cluster is now in default queue at Nantes

We are pleased to announce that the ecotaxe cluster of Nantes is now available in the default queue.

As a reminder, ecotaxe is a cluster composed of 2 HPE ProLiant DL385 Gen10 Plus v2 servers[1].

Each node features:

To submit a job on this cluster, the following command may be used:

oarsub -t exotic -p ecotaxe
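As a minimal sketch, assuming an interactive reservation of one host for one hour is wanted, the command above can be combined with OAR's standard `-l` resource request and `-I` interactive flag. The `submit_ecotaxe` function and the `DRY_RUN` variable are hypothetical helpers for illustration only, not part of the Grid'5000 tooling, and the host count and walltime are placeholder values.

```shell
#!/usr/bin/env bash
# Hypothetical helper: request ecotaxe nodes through OAR.
# Assumed defaults: 1 host, 1 hour of walltime, interactive shell (-I).
submit_ecotaxe() {
  local hosts="${1:-1}"
  local walltime="${2:-1:00}"
  # "-t exotic -p ecotaxe" comes from the announcement; -l and -I are standard OAR options
  local cmd=(oarsub -t exotic -p ecotaxe -l "host=${hosts},walltime=${walltime}" -I)
  if [ -n "${DRY_RUN:-}" ]; then
    # Hypothetical dry-run mode: print the command instead of submitting it
    printf '%s\n' "${cmd[*]}"
  else
    "${cmd[@]}"
  fi
}
```

For example, `DRY_RUN=1 submit_ecotaxe 2 2:00` prints the request for two hosts and two hours without submitting it; dropping `DRY_RUN` sends the same request to `oarsub`.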

This cluster is co-funded by Région Pays de la Loire, FEDER and REACT EU via the CPER SAMURAI [3].

[1] https://www.grid5000.fr/w/Nantes:Hardware#ecotaxe

[2] The observed throughput depends on multiple parameters such as the workload, the number of streams, ...

[3] https://www.imt-atlantique.fr/fr/recherche-innovation/collaborer/projet/samurai

-- Grid'5000 Team 14:10, 02 December 2025 (CET)

Grid'5000 sites

Current funding

INRIA

CNRS

Universities: IMT Atlantique

Regional councils: Aquitaine