Grid5000:Home


Grid'5000 is a precursor infrastructure of SLICES-RI, Scientific Large Scale Infrastructure for Computing/Communication Experimental Studies.


Grid'5000 is a large-scale and flexible testbed for experiment-driven research in all areas of computer science, with a focus on parallel and distributed computing, including Cloud, HPC, Big Data and AI. Key features:

Older documents:


Random pick of publications

Five random publications that benefited from Grid'5000 (at least 2945 overall):

- Rosa Pagano, Sophie Cerf, Bogdan Robu, Quentin Guilloteau, Raphaël Bleuse, et al. Making Control in High Performance Computing for Overload Avoidance Adaptive in Time and Job Size. CCTA 2024 - 8th IEEE Conference on Control Technology and Applications, Aug 2024, Newcastle Upon Tyne, United Kingdom. pp.1-8. hal-04669743

- Hee-Soo Choi, Priyansh Trivedi, Mathieu Constant, Karën Fort, Bruno Guillaume. Au-delà de la performance des modèles : la prédiction de liens peut-elle enrichir des graphes lexico-sémantiques du français ? Actes de JEP-TALN-RECITAL 2024. 31ème Conférence sur le Traitement Automatique des Langues Naturelles, volume 1 : articles longs et prises de position, Jul 2024, Toulouse, France. pp.36-49. hal-04623008

- Georges da Costa, Amina Guermouche. Measurement methods sheet, WG6 Exa-Soft. Université de Toulouse; Université de Bordeaux. 2025. hal-05272179

- Pierre-François Gimenez, Jérôme Mengin. Learning Conditional Preference Networks: an Approach Based on the Minimum Description Length Principle. IJCAI 2024 - 33rd International Joint Conference on Artificial Intelligence, Aug 2024, Jeju, South Korea. pp.3395-3403, 10.24963/ijcai.2024/376. hal-04572196

- Cédric Prigent, Melvin Chelli, Alexandru Costan, Loïc Cudennec, René Schubotz, et al. Efficient Resource-Constrained Federated Learning Clustering with Local Data Compression on the Edge-to-Cloud Continuum. HiPC 2024 - 31st IEEE International Conference on High Performance Computing, Data, and Analytics, Dec 2024, Bengaluru (Bangalore), India. pp.1-11, 10.1109/HiPC62374.2024.00033. hal-04779813

Latest news

Cluster Sasquatch is now in default queue at Grenoble

We are pleased to announce that the Sasquatch [1] cluster is now available in the default queue.

Sasquatch is a cluster composed of 2 HPE RL300 nodes, each featuring:

This cluster was funded by the PEPR IA.

[1] https://www.grid5000.fr/w/Grenoble:Hardware#sasquatch

[2] https://amperecomputing.com/briefs/ampere-altra-family-product-brief

Best regards, Grid'5000 Technical Team

-- Grid'5000 Team 10:15, 11 February 2026 (CEST)

Cluster Spirou is now in default queue at Louvain

We are pleased to announce that the Spirou[1] cluster of the newly installed Louvain site is now available in the default queue.

Spirou is a cluster composed of 8 Lenovo ThinkSystem SR630 V2 nodes, each featuring:

Be aware that we noticed I/O inconsistencies on this cluster.

We advise users to take this into account when running experiments on the cluster. See the following bug for more information: https://intranet.grid5000.fr/bugzilla/show_bug.cgi?id=16938

This cluster was funded by the Fonds de la Recherche Scientifique – FNRS (F.R.S.–FNRS), and its operation is supported by F.R.S.–FNRS and the Wallonia region (SPW).

[1] https://www.grid5000.fr/w/Louvain:Hardware#spirou

Best regards,

Grid'5000 Technical Team

-- Grid'5000 Team 10:24, 12 January 2026 (CEST)

End of support for centOS7/8 and centOSStream8 environments

Support for the centOS7/8 and centOSStream8 kadeploy environments has been discontinued due to the end of upstream support and compatibility issues with recent hardware.

The last versions of the centOS7 (version 2024071117), centOS8 (version 2024071119) and centOSStream8 (version 2024070316) environments will remain available in /grid5000. Older versions can still be accessed in the archive directory (see /grid5000/README.unmaintained-envs for more information).

-- Grid'5000 Team 08:44, 4 December 2025 (CEST)

Ecotaxe cluster is now in default queue at Nantes

We are pleased to announce that the ecotaxe cluster of Nantes is now available in the default queue.

As a reminder, ecotaxe is a cluster composed of 2 HPE ProLiant DL385 Gen10 Plus v2 servers[1].

Each node features:

To submit a job on this cluster, the following command may be used:

oarsub -t exotic -p ecotaxe
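For scripted submissions, the same command line can be assembled programmatically. The following is only a minimal sketch: the helper name `build_oarsub_command` is hypothetical, and it uses only the `-t` and `-p` options shown above. It prints the command rather than running it, since `oarsub` is only available on a Grid'5000 frontend.

```python
import shlex


def build_oarsub_command(cluster, job_type="exotic"):
    """Return the oarsub argument list for a job on the given cluster.

    Hypothetical helper: it only reproduces the -t (job type) and
    -p (property) options used in the announcement above.
    """
    return ["oarsub", "-t", job_type, "-p", cluster]


if __name__ == "__main__":
    # Print the command instead of executing it, so the sketch also
    # works on a machine where oarsub is not installed.
    print(shlex.join(build_oarsub_command("ecotaxe")))
```

On a frontend, the returned list could be passed to `subprocess.run`; any additional options should be checked against the OAR documentation first.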

This cluster is co-funded by Région Pays de la Loire, FEDER and REACT EU via the CPER SAMURAI [3].

[1] https://www.grid5000.fr/w/Nantes:Hardware#ecotaxe

[2] The observed throughput depends on multiple parameters such as the workload, the number of streams, ...

[3] https://www.imt-atlantique.fr/fr/recherche-innovation/collaborer/projet/samurai

-- Grid'5000 Team 14:10, 02 December 2025 (CET)

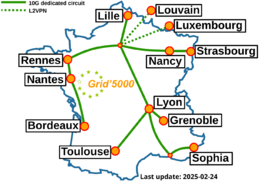

Grid'5000 sites

Current funding

INRIA

CNRS

Universities: IMT Atlantique

Regional councils: Aquitaine