Grid5000:Home

Grid'5000 is a precursor infrastructure of SLICES-RI, Scientific Large Scale Infrastructure for Computing/Communication Experimental Studies.

Grid'5000 is a large-scale and flexible testbed for experiment-driven research in all areas of computer science, with a focus on parallel and distributed computing, including Cloud, HPC, Big Data and AI. Key features:

Random pick of publications

Five random publications that benefited from Grid'5000 (at least 2946 overall):

- Ndeye-Emilie Mbengue, Pierre Monnin, Miguel Couceiro, Fabien Gandon. Which Are the Low-Resource Languages of the Semantic Web? ESWC 2026 - 23rd European Semantic Web Conference, May 2026, Dubrovnik, Croatia. hal-05607932

- Ahmed Alharbi, Charles Bouillaguet. Lower bounds on the performance of hardware circuits computing the Möbius transform. 2026. hal-04896063v2

- Louis Roussel. Integral equations modelling and deep learning. Machine Learning [cs.LG]. Université de Lille, 2025. English. NNT : 2025ULILB033. tel-05567799v2

- Gautier Evennou, Vivien Chappelier, Ewa Kijak, Teddy Furon. SWIFT: Semantic Watermarking for Image Forgery Thwarting. WIFS 2024 - 16th IEEE International Workshop on Information Forensics and Security, IEEE, Dec 2024, Roma, Italy. pp.1-6. hal-04728070

- Marc Jourdan, Clémence Réda. An Anytime Algorithm for Good Arm Identification. 2024. hal-04688141

Latest news

Cluster Chicoree is now in default queue at Lille

We are pleased to announce that the Chicoree [1] cluster is now available in the default queue.

Chicoree is a cluster composed of one ProLiant DL380a Gen12 node, featuring:

This cluster was funded by the CPER CornelIA.

This cluster is tagged as "exotic", so the `-t exotic` option must be provided to oarsub to select chicoree.

[1] https://www.grid5000.fr/w/Lille:Hardware#chicoree

Best regards, Grid'5000 Technical Team

-- Grid'5000 Team 09:30, 04 June 2026 (CEST)

Cluster Sasquatch is now in default queue at Grenoble

We are pleased to announce that the Sasquatch [1] cluster is now available in the default queue.

Sasquatch is a cluster composed of 2 HPE RL300 nodes, each featuring:

This cluster was funded by the PEPR IA.

[1] https://www.grid5000.fr/w/Grenoble:Hardware#sasquatch

[2] https://amperecomputing.com/briefs/ampere-altra-family-product-brief

Best regards, Grid'5000 Technical Team

-- Grid'5000 Team 10:15, 11 February 2026 (CEST)

Cluster Spirou is now in default queue at Louvain

We are pleased to announce that the Spirou [1] cluster of the newly installed Louvain site is now available in the default queue.

Spirou is a cluster composed of 8 Lenovo ThinkSystem SR630 V2 nodes, each featuring:

Please note that we have observed I/O inconsistencies on this cluster.

We advise users to take this into account when running experiments on this cluster. See the following bug for more information: https://intranet.grid5000.fr/bugzilla/show_bug.cgi?id=16938

This cluster was funded by the Fonds de la Recherche Scientifique – FNRS (F.R.S.–FNRS), and its operation is supported by F.R.S.–FNRS and the Wallonia region (SPW).

[1] https://www.grid5000.fr/w/Louvain:Hardware#spirou

Best regards, Grid'5000 Technical Team

-- Grid'5000 Team 10:24, 12 January 2026 (CEST)

End of support for CentOS 7/8 and CentOS Stream 8 environments

Support for the CentOS 7/8 and CentOS Stream 8 kadeploy environments has ended, due to the end of upstream support and to compatibility issues with recent hardware.

The last versions of the CentOS 7 environments (version 2024071117), CentOS 8 environments (version 2024071119) and CentOS Stream 8 environments (version 2024070316) will remain available in /grid5000. Older versions can still be accessed in the archive directory (see /grid5000/README.unmaintained-envs for more information).

-- Grid'5000 Team 08:44, 4 December 2025 (CEST)

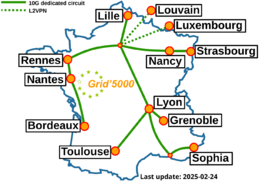

Grid'5000 sites

Current funding

- INRIA
- CNRS
- Universities: IMT Atlantique
- Regional councils: Aquitaine