Grid5000:Home


Grid'5000 is a large-scale and flexible testbed for experiment-driven research in all areas of computer science, with a focus on parallel and distributed computing, including Cloud, HPC, Big Data and AI.

Grid'5000 is merging with FIT to build the SILECS Infrastructure for Large-scale Experimental Computer Science. Read an Introduction to SILECS (April 2018)


Random pick of publications

Five random publications that benefited from Grid'5000 (at least 2779 overall):

- Pierre Epron, Gaël Guibon, Miguel Couceiro. ORPAILLEUR SyNaLP at CLEF 2024 Task 2: Good Old Cross Validation for Large Language Models Yields the Best Humorous Detection. Working Notes of the Conference and Labs of the Evaluation Forum (CLEF 2024), Sep 2024, Grenoble, France. pp.1841-1856. hal-04696012

- Rémi Meunier, Thomas Carle, Thierry Monteil. Multi-core interference over-estimation reduction by static scheduling of multi-phase tasks. Real-Time Systems, 2024, pp.1-39. 10.1007/s11241-024-09427-3. hal-04689317

- Lucas Leandro Nesi. Strategies for Distributing Task-Based Applications on Heterogeneous Platforms. Distributed, Parallel, and Cluster Computing [cs.DC]. Université Grenoble Alpes 2020-..; Universidade Federal do Rio Grande do Sul (Porto Alegre, Brésil), 2023. English. NNT: 2023GRALM048. tel-04468314

- Guillaume Rosinosky, Donatien Schmitz, Etienne Rivière. StreamBed: Capacity Planning for Stream Processing. DEBS 2024 - 18th ACM International Conference on Distributed and Event-based Systems, Jun 2024, Lyon, France. pp.90-102, 10.1145/3629104.3666034. hal-04708354

- Alaaeddine Chaoub. Deep learning representations for prognostics and health management. Computer Science [cs]. Université de Lorraine, 2024. English. NNT: 2024LORR0057. tel-04687618


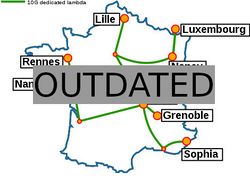

Grid'5000 sites

Current funding

Since June 2008, Inria has been the main contributor to Grid'5000 funding.

- Inria
- CNRS
- Universities: Université Grenoble Alpes, Grenoble INP
- Regional councils: Aquitaine