Grid5000:Home


Grid'5000 is a large-scale and versatile testbed for experiment-driven research in all areas of computer science, with a focus on parallel and distributed computing including Cloud, HPC and Big Data. Key features:

Older documents:


Random pick of publications

Five random publications that benefited from Grid'5000 (at least 2780 overall):

- Kelvin Han, Claire Gardent. Multilingual Generation and Answering of Questions from Texts and Knowledge Graphs. The 2023 Conference on Empirical Methods in Natural Language Processing (EMNLP 2023), ACL, Dec 2023, Singapore, Singapore. pp.13740-13756, 10.18653/v1/2023.findings-emnlp.918. hal-04369793

- Maxime Méloux, Christophe Cerisara. Novel-WD: Exploring acquisition of Novel World Knowledge in LLMs Using Prefix-Tuning. 2023. hal-04269919

- Geo Johns Antony, Marie Delavergne, Adrien Lebre, Matthieu Rakotojaona Rainimangavelo. Thinking out of replication for geo-distributing applications: the sharding case. ICFEC 2024: 8th IEEE International Conference on Fog and Edge Computing, May 2024, Philadelphia, United States. pp.1-8, 10.1109/ICFEC61590.2024.00019. hal-04522961

- Luca Di Stefano, Frédéric Lang. Compositional verification of priority systems using sharp bisimulation. Formal Methods in System Design, 2024, 62, pp.1-40. 10.1007/s10703-023-00422-1. hal-04103681

- Matthieu Simonin, Anne-Cécile Orgerie. Méthodologies de calcul d'empreinte carbone sur une plateforme de calcul : exemple du site Grid'5000 de Rennes. JRES 2024 - Journées réseaux de l'enseignement et de la recherche, Renater, Dec 2024, Rennes, France. pp.1-14. hal-04893984

Latest news


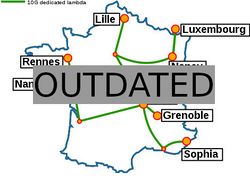

Grid'5000 sites

Current funding

Since June 2008, Inria has been the main contributor to Grid'5000 funding.

- INRIA

- CNRS

- Universities: Université Grenoble Alpes, Grenoble INP

- Regional councils: Aquitaine