Grid5000:Home: Difference between revisions


| (46 intermediate revisions by 6 users not shown) | |||

| Line 4: | Line 4: | ||

|bgcolor="#f5fff5" style="border:1px solid #cccccc;padding:1em;padding-top:0.5em;"| | |bgcolor="#f5fff5" style="border:1px solid #cccccc;padding:1em;padding-top:0.5em;"| | ||

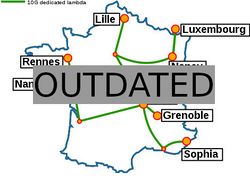

[[Image:renater5-g5k.jpg|thumbnail|250px|right|Grid'5000]] | [[Image:renater5-g5k.jpg|thumbnail|250px|right|Grid'5000]] | ||

'''Grid'5000 is a large-scale and flexible testbed for experiment-driven research in all areas of computer science, with a focus on parallel and distributed computing, including Cloud, HPC, Big Data and AI.''' | |||


Key features: | Key features: | ||

* provides '''access to a large amount of resources''': | * provides '''access to a large amount of resources''': 12000 cores, 800 compute-nodes grouped in homogeneous clusters, and featuring various technologies: GPU, SSD, NVMe, 10G and 25G Ethernet, Infiniband, Omni-Path | ||

* '''highly reconfigurable and controllable''': researchers can experiment with a fully customized software stack thanks to bare-metal deployment features, and can isolate their experiment at the networking layer | * '''highly reconfigurable and controllable''': researchers can experiment with a fully customized software stack thanks to bare-metal deployment features, and can isolate their experiment at the networking layer | ||

* '''advanced monitoring and measurement features for collecting network and power consumption traces''', providing a deep understanding of experiments | * '''advanced monitoring and measurement features for collecting network and power consumption traces''', providing a deep understanding of experiments | ||

| Line 15: | Line 14: | ||

<br> | <br> | ||

Read more about our [[ | Read more about our [[Team|teams]], our [[Publications|publications]], and the [[Grid5000:UsagePolicy|usage policy]] of the testbed. Then [[Grid5000:Get_an_account|get an account]], and learn how to use the testbed with our [[Getting_Started|Getting Started tutorial]] and the rest of our [[:Category:Portal:User|Users portal]]. | ||


<b>Grid'5000 is merging with [https://fit-equipex.fr FIT] to build the [http://www.silecs.net/ SILECS Infrastructure for Large-scale Experimental Computer Science]. Read [http://www.silecs.net/wp-content/uploads/2018/04/Desprez-SILECS.pdf an Introduction to SILECS] (April 2018)</b> | |||

<br> | <br> | ||

Recently published documents: | Recently published documents and presentations: | ||

* Slides from Frederic Desprez's keynote at IEEE CLUSTER 2014 | * [[Media:Grid5000.pdf|Presentation of Grid'5000]] (April 2019) | ||

* Report from the Grid'5000 Science Advisory Board | * [https://www.grid5000.fr/mediawiki/images/Grid5000_science-advisory-board_report_2018.pdf Report from the Grid'5000 Science Advisory Board (2018)] | ||

Older documents: | |||

* [https://www.grid5000.fr/slides/2014-09-24-Cluster2014-KeynoteFD-v2.pdf Slides from Frederic Desprez's keynote at IEEE CLUSTER 2014] | |||

* [https://www.grid5000.fr/ScientificCommittee/SAB%20report%20final%20short.pdf Report from the Grid'5000 Science Advisory Board (2014)] | |||

<br> | <br> | ||

| Line 27: | Line 31: | ||

|} | |} | ||

<br> | <br> | ||

{{#status:0|0|0|http://bugzilla.grid5000.fr/status/upcoming.json}} | {{#status:0|0|0|http://bugzilla.grid5000.fr/status/upcoming.json}} | ||

<br> | |||

== Random pick of publications == | |||

{{#publications:}} | |||

==Latest news== | |||

<rss max=4 item-max-length="2000">https://www.grid5000.fr/mediawiki/index.php?title=News&action=feed&feed=atom</rss> | |||


---- | ---- | ||

[[ | [[News|Read more news]] | ||

=== Grid'5000 sites=== | === Grid'5000 sites=== | ||

{|width=" | {|width="100%" cellspacing="3" | ||

|- valign="top" | |- valign="top" | ||

|width="33%" bgcolor="#f5f5f5" style="border:1px solid #cccccc;padding:1em;padding-top:0.5em;"| | |width="33%" bgcolor="#f5f5f5" style="border:1px solid #cccccc;padding:1em;padding-top:0.5em;"| | ||

| Line 95: | Line 50: | ||

* [[Lille:Home|Lille]] | * [[Lille:Home|Lille]] | ||

* [[Luxembourg:Home|Luxembourg]] | * [[Luxembourg:Home|Luxembourg]] | ||

|width="33%" bgcolor="#f5f5f5" style="border:1px solid #cccccc;padding:1em;padding-top:0.5em;"| | |||

* [[Lyon:Home|Lyon]] | * [[Lyon:Home|Lyon]] | ||

* [[Nancy:Home|Nancy]] | * [[Nancy:Home|Nancy]] | ||

* [[Nantes:Home|Nantes]] | * [[Nantes:Home|Nantes]] | ||

|width="33%" bgcolor="#f5f5f5" style="border:1px solid #cccccc;padding:1em;padding-top:0.5em;"| | |||

* [[Rennes:Home|Rennes]] | * [[Rennes:Home|Rennes]] | ||

* [[Sophia:Home|Sophia-Antipolis]] | * [[Sophia:Home|Sophia-Antipolis]] | ||

* [[Toulouse:Home|Toulouse]] | * [[Toulouse:Home|Toulouse]] | ||

| Line 108: | Line 62: | ||

== Current funding == | == Current funding == | ||

As from June 2008, | As from June 2008, Inria is the main contributor to [[Grid5000:Funding|Grid'5000 funding]]. | ||

{|width="100%" cellspacing="3" | {|width="100%" cellspacing="3" | ||

|- | |- | ||

| Line 116: | Line 70: | ||

| width="50%" bgcolor="#f5f5f5" valign="top" align="center" style="border:1px solid #cccccc;padding:1em;padding-top:0.5em;"| | | width="50%" bgcolor="#f5f5f5" valign="top" align="center" style="border:1px solid #cccccc;padding:1em;padding-top:0.5em;"| | ||

===CNRS=== | ===CNRS=== | ||

[[Image:CNRS-filaire- | [[Image:CNRS-filaire-Quadri.png|125px]] | ||

|- | |- | ||

| width="50%" bgcolor="#f5f5f5" valign="top" align="center" style="border:1px solid #cccccc;padding:1em;padding-top:0.5em;"| | | width="50%" bgcolor="#f5f5f5" valign="top" align="center" style="border:1px solid #cccccc;padding:1em;padding-top:0.5em;"| | ||

===Universities=== | ===Universities=== | ||

Université Grenoble Alpes, Grenoble INP<br/> | |||

Université Rennes 1, Rennes<br/> | |||

Institut National Polytechnique de Toulouse / INSA / FERIA / Université Paul Sabatier, Toulouse<br/> | Institut National Polytechnique de Toulouse / INSA / FERIA / Université Paul Sabatier, Toulouse<br/> | ||

Université Bordeaux 1, Bordeaux<br/> | |||

Université Lille 1, Lille<br/> | |||

École Normale Supérieure, Lyon<br/> | |||

| width="50%" bgcolor="#f5f5f5" valign="top" align="center" style="border:1px solid #cccccc;padding:1em;padding-top:0.5em;"| | | width="50%" bgcolor="#f5f5f5" valign="top" align="center" style="border:1px solid #cccccc;padding:1em;padding-top:0.5em;"| | ||

===Regional councils=== | ===Regional councils=== | ||

Aquitaine<br/> | Aquitaine<br/> | ||

Auvergne-Rhône-Alpes<br/> | |||

Bretagne<br/> | Bretagne<br/> | ||

Champagne-Ardenne<br/> | Champagne-Ardenne<br/> | ||

Provence Alpes Côte d'Azur<br/> | Provence Alpes Côte d'Azur<br/> | ||

Hauts de France<br/> | |||

Lorraine<br/> | Lorraine<br/> | ||

|} | |} | ||

Revision as of 08:23, 4 October 2019


Grid'5000 is a large-scale and flexible testbed for experiment-driven research in all areas of computer science, with a focus on parallel and distributed computing, including Cloud, HPC, Big Data and AI. Key features:

Grid'5000 is merging with FIT to build the SILECS Infrastructure for Large-scale Experimental Computer Science. Read an Introduction to SILECS (April 2018)

Older documents:


Random pick of publications

Five random publications that benefited from Grid'5000 (at least 2780 overall):

- Chuyuan Li, Maxime Amblard, Chloé Braud. A Semi-supervised Dialogue Discourse Parsing Pipeline. Journées Scientifiques du GDR Lift (LIFT 2023), Nov 2023, Nancy, France. hal-04356416

- Félix Gaschi, Xavier Fontaine, Parisa Rastin, Yannick Toussaint. Multilingual Clinical NER: Translation or Cross-lingual Transfer?. 5th Clinical Natural Language Processing Workshop, Jul 2023, Toronto, Canada. pp.289-311, 10.18653/v1/2023.clinicalnlp-1.34. hal-04193182

- Anna Kravchenko. Fragment-based modelling of protein-RNA complexes for protein design. Bioinformatics q-bio.QM. Université de Lorraine, 2023. English. NNT : 2023LORR0370. tel-04504677

- Thomas Firmin, Pierre Boulet, El-Ghazali Talbi. Asynchronous Multi-fidelity Hyperparameter Optimization Of Spiking Neural Networks. International Conference on Neuromorphic Systems (ICONS 2024), Jul 2024, Washington, United States. hal-04781629

- Chih-Kai Huang, Guillaume Pierre. Aggregate Monitoring for Geo-Distributed Kubernetes Cluster Federations. IEEE Transactions on Cloud Computing, 2024, 12 (4), pp.1449-1462. 10.1109/TCC.2024.3482574. hal-04736577

Latest news


Grid'5000 sites

Current funding

As from June 2008, Inria is the main contributor to Grid'5000 funding.

INRIA

CNRS

Universities: Université Grenoble Alpes, Grenoble INP

Regional councils: Aquitaine