Getting Started

| Note |
|---|
| This page is actively maintained by the Grid'5000 team. If you encounter problems, please report them (see the Support page). Additionally, as it is a wiki page, you are free to make minor corrections yourself if needed. If you would like to suggest a more fundamental change, please contact the Grid'5000 team. |

This tutorial will guide you through your first steps on Grid'5000. Before proceeding, make sure you have a Grid'5000 account (if not, follow this procedure), and an SSH client.

Getting support

The Support page describes how to get help during your Grid'5000 usage. There's also an FAQ and a cheat sheet with the most common commands.

Connecting for the first time

The primary way to move around Grid'5000 is using SSH. If you are not familiar with SSH, please consider the specific SSH and Grid'5000 tutorial. A reference page for SSH is also maintained with advanced configuration options heavy users will find useful.

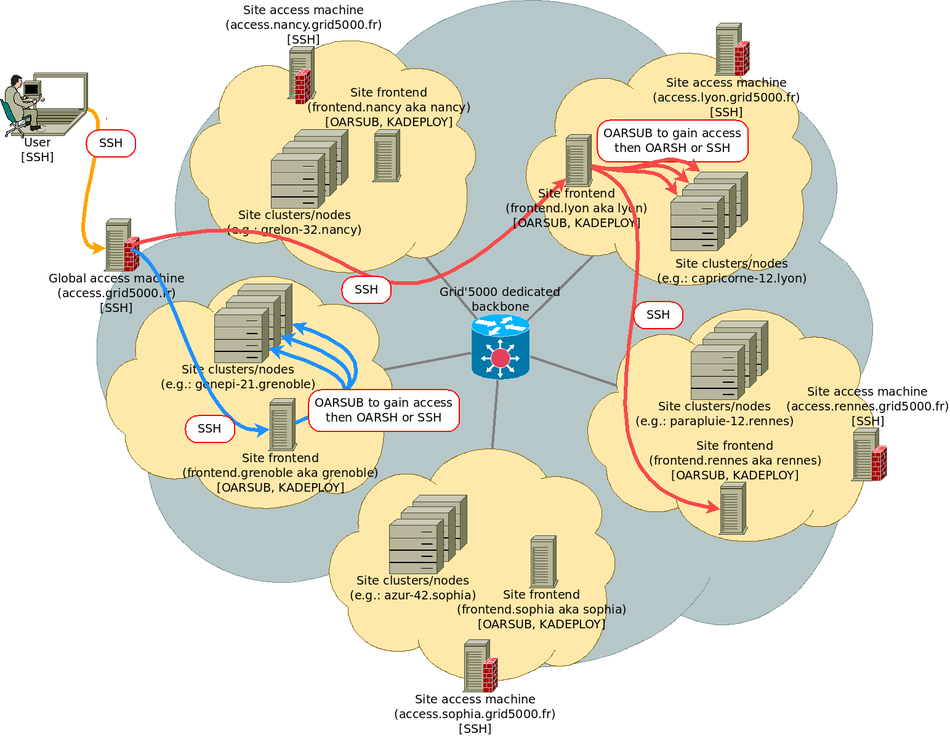

As described in the figure above, when using Grid'5000, you will typically:

- connect, using SSH, to an access machine

- connect from this access machine to a site frontend

- on this site frontend, reserve resources (nodes), and connect to those nodes

SSH connection through a web interface

If you want an out-of-the-box solution that does not require you to set up SSH, you can connect through a web interface. The interface is available at https://intranet.grid5000.fr/shell/SITE/. For example, to access the Nancy site, use https://intranet.grid5000.fr/shell/nancy/. To connect, you will have to type in your credentials twice (first for the HTTP proxy, then for the SSH connection).

This solution is probably suitable for following this tutorial, but is unlikely to be suitable for real Grid'5000 usage. You should therefore read the following sections about how to set up and use SSH at some point.

Connect to a Grid'5000 access machine

The access.grid5000.fr address points to two actual machines: access-south (currently hosted in Sophia-Antipolis) and access-north (currently hosted in Lille). Those machines provide SSH access to Grid'5000 from the Internet.

You will get authenticated using the SSH public key you provided in the account creation form. Password authentication is disabled.

| Note |
|---|
| You can modify your SSH keys in the account management interface. |

If you prefer (for better bandwidth and latency), you might also be able to connect directly via your local Grid'5000 site. However, per-site access restrictions are applied, so using access.grid5000.fr is usually a simpler choice. See External_access for details about local access machines.

A VPN service is also available to connect directly to Grid'5000 hosts. See the VPN page for more information. If you only require HTTP/HTTPS access to a node, a reverse HTTP proxy is also available, see here.

Connecting to a Grid'5000 site

Grid'5000 is structured in sites (Grenoble, Rennes, Nancy, ...). Each site hosts one or more clusters (homogeneous sets of machines, usually bought at the same time).

To connect to a particular site, do the following (blue and red arrows labelled SSH in the figure above).
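As a minimal sketch of these two hops, the snippet below only prints the commands to type, first on your own machine and then on the access machine. The login `jdoe` is a hypothetical placeholder, and reaching the frontend through the bare site name is an assumption rather than something stated on this page.

```shell
# Hypothetical values: replace with your own Grid'5000 login and target site.
G5K_LOGIN="jdoe"
G5K_SITE="nancy"

# Hop 1, typed on your own machine: reach the access machine.
hop1="ssh ${G5K_LOGIN}@access.grid5000.fr"

# Hop 2, typed on the access machine: reach the site frontend
# (assumption: the bare site name resolves from the access machine).
hop2="ssh ${G5K_SITE}"

# Print both commands, one per line.
printf '%s\n' "$hop1" "$hop2"
```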

Home directories

You have a different home directory on each Grid'5000 site, so you will usually use Rsync or scp to move data around.

On access machines, you have direct access to each of those home directories, through NFS mounts (but using that feature to transfer very large volumes of data is inefficient). Typically, to copy a file to your home directory on the Nancy site, you can use:
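A possible way to build the destination for such a copy is sketched below. It assumes that each site's home directory appears as a directory named after the site in your home on the access machine; both that layout and the helper name `g5k_home_dest` are assumptions, not confirmed by this page.

```shell
# Build the scp/rsync destination for a site's home directory,
# going through the access machine.
# Assumption: the site's home is mounted under ~/SITE/ on access machines.
# $1 = login (hypothetical), $2 = site name
g5k_home_dest() {
    printf '%s@access.grid5000.fr:%s/' "$1" "$2"
}

# Example (not executed here):
#   scp myfile.c "$(g5k_home_dest jdoe nancy)"
```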

Grid'5000 does NOT have a BACKUP service for users' home directories: it is your responsibility to save important data outside Grid'5000 (or at least to copy data to several Grid'5000 sites in order to increase redundancy).

Quotas are applied on home directories -- by default, you get 25 GB per Grid'5000 site. If your usage of Grid'5000 requires more disk space, it is possible to request quota extensions in the account management interface, or to use other storage solutions (see Storage).

Recommended tips and tricks for efficient use of Grid'5000

There are also several recommended tips and tricks for SSH and related tools, explained in the SSH page:

- Configure SSH aliases using the ProxyCommand option. Using this, you can avoid the two-step connection (access machine, then frontend) and connect directly to frontends. Edit your ~/.ssh/config:

      Host g5k
        User USERNAME
        Hostname access.grid5000.fr
        ForwardAgent no

      Host *.g5k
        User USERNAME
        ProxyCommand ssh g5k -W "$(basename %h .g5k):%p"
        ForwardAgent no

- Using rsync instead of scp (better performance with multiple files)

- Access your data from your laptop using SSHFS

- Edit files over SSH with your favorite text editor, with e.g. vim scp://nancy.g5k/my_file.c
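To see what the ProxyCommand of the `Host *.g5k` alias does, the sketch below reproduces its `basename` call: the `.g5k` suffix is stripped from the host name you typed, and the remainder is the host the access machine is asked to reach. The helper name `g5k_target` is hypothetical.

```shell
# Mimic the "$(basename %h .g5k)" part of the ProxyCommand.
# ssh replaces %h with the host name given on the command line.
g5k_target() {
    basename "$1" .g5k
}

g5k_target nancy.g5k            # prints: nancy
g5k_target grisou-1.nancy.g5k   # prints: grisou-1.nancy
```

With this alias in place, `ssh nancy.g5k` is tunnelled through the `g5k` host and lands on the host named `nancy`.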

There are more in this talk from Grid'5000 School 2010, and this talk more focused on SSH.

Additionally, the Grid'5000 cheat sheet provides a nice summary of everything described in the tutorials.

Discovering, visualizing and reserving Grid'5000 resources

At this point, you should be connected to a site frontend, as indicated by your shell prompt (login@fsite:~$). This machine will be used to reserve and manipulate resources on this site, using the OAR software suite.

Discovering and visualizing resources

There are several ways to learn about the site's resources and their status:

- The site's MOTD (message of the day) lists all clusters and their features. Additionally, it gives the list of current or future downtimes due to maintenance, which is also available from https://www.grid5000.fr/status/.

- Site pages on the wiki (e.g. Nancy:Home) contain a detailed description of the site's hardware and network:

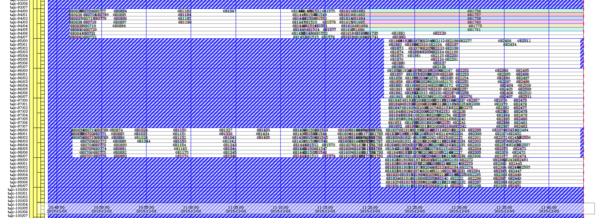

- The Status page links to the resource status on each site, with two different visualizations available:

- Monika, that provides the current status of nodes (see Nancy's current status)

- Gantt, that provides current and planned resources reservations (see Nancy's current status; example in the figure below).

- The Grid'5000 API (we'll look at that later on) provides a machine-readable description of Grid'5000 and machine-readable status information. This web UI can be used to discover resources.

Reserving resources with OAR: the basics

| Note |
|---|
| OAR is the resources and jobs management system (a.k.a. batch manager) used in Grid'5000, just like in traditional HPC centers. However, the settings and rules of OAR configured in Grid'5000 differ slightly from traditional batch manager setups in HPC centers, in order to match the requirements of an experimentation testbed. Please read the Grid'5000 Usage Policy again to understand the expected usage. |

In Grid'5000 the smallest unit of resource managed by OAR is the core (CPU core), but by default an OAR job reserves a host (a physical computer including all its CPUs and cores). Hence, what OAR calls nodes are hosts (physical machines).

| Note | |

|---|---|

Most of this tutorial uses the site of Nancy (with the frontend: | |

To reserve one host (one node), in interactive mode, do:

To reserve three hosts (three nodes), in interactive mode, do:

or equivalently:

To reserve only one core in interactive mode, run:
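The interactive reservations described above can be sketched as small wrapper functions. This is an illustration under assumptions: it reuses the `-l nodes=N` and `-I` syntax that appears later on this page, and it assumes a single core is requested as `core=1`. The function names are hypothetical.

```shell
# Reserve N whole hosts in interactive mode (N defaults to 1).
reserve_hosts() {
    oarsub -l "nodes=${1:-1}" -I
}

# Reserve a single core in interactive mode.
# Assumption: the core resource is requested as core=1.
reserve_core() {
    oarsub -l core=1 -I
}

# Examples (to be run on a site frontend):
#   reserve_hosts      # one host
#   reserve_hosts 3    # three hosts
#   reserve_core       # one core
```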

As soon as a resource becomes available, you will be directly connected to the reserved resource with an interactive shell, as indicated by the shell prompt.

You can also simply launch your experiment along with your reservation:

Your program will be executed as soon as the requested resources are available (you will have to check for its termination using the oarstat command).

To reserve only one GPU (with the associated cores) in interactive mode, run:

(Nodes with GPUs are exclusively in the production queue in Nancy; on other sites, simply omit the -q production option to obtain the same result.)
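A hedged sketch of the GPU reservation follows. It assumes that a GPU is requested as `gpu=1` in the `-l` resource list, which this page does not spell out. The queue is an optional argument so that the same hypothetical helper works in Nancy and on other sites.

```shell
# Reserve one GPU (with its associated cores) in interactive mode.
# $1 = optional queue name: pass "production" in Nancy, nothing elsewhere.
# Assumption: the GPU resource is requested as gpu=1.
reserve_gpu() {
    if [ -n "$1" ]; then
        oarsub -q "$1" -l gpu=1 -I
    else
        oarsub -l gpu=1 -I
    fi
}

# Examples (to be run on a site frontend):
#   reserve_gpu production   # Nancy
#   reserve_gpu              # other sites
```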

As soon as a resource becomes available, you will be directly connected to the reserved resource with an interactive shell, as indicated by the shell prompt.

To terminate your reservation and return to the frontend, simply exit this shell by typing exit or CTRL+d:

To avoid unanticipated termination of your jobs in case of errors (terminal closed by mistake, network disconnection), you can either use tools such as tmux or screen, or reserve and connect in two steps using the job id associated with your reservation. First, reserve a node, and ask it to sleep for a long time:

(10d stands for 10 days -- the command will be killed when the job expires anyway)

Then:

    grisou-42: hostname && ps -ef | grep sleep
    grisou-42: env | grep OAR # discover environment variables set by OAR
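The two-step approach described above can be sketched as follows. The `sleep 10d` command comes from the text; connecting to an existing job with `oarsub -C JOB_ID` is an assumption about the OAR command line, and the function names are hypothetical.

```shell
# Step 1: submit a job that only sleeps, so the node stays reserved
# even if your terminal or network connection goes away.
reserve_detached() {
    oarsub "sleep 10d"
}

# Step 2: open a shell inside the running job, given its job id.
# Assumption: oarsub -C JOB_ID connects to an existing job.
connect_to_job() {
    oarsub -C "$1"
}

# Example (to be run on a site frontend):
#   reserve_detached        # note the job id that is printed
#   connect_to_job 12345    # 12345 stands for that job id
```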

Of course, you will probably want to use more than one node on a given site, and you might want them for a different duration than one hour. The -l switch allows you to pass a comma-separated list of parameters specifying the needed resources for the job.

The walltime is the duration you expect your work to take. Its format is [hour:min:sec|hour:min|hour] (walltime=5 => 5 hours, walltime=1:22 => 1 hour 22 minutes, walltime=0:03:30 => 3 minutes, 30 seconds).
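To make the walltime format concrete, here is a small sketch that converts a walltime string into seconds. It is not part of OAR; the helper name `walltime_to_seconds` is hypothetical, and it only handles the three forms listed above.

```shell
# Convert a walltime (hour, hour:min or hour:min:sec) into seconds.
# The function body runs in a subshell so IFS and variables stay local.
walltime_to_seconds() (
    IFS=:
    set -- $1                     # split the argument on ':'
    h=${1:-0}; m=${2:-0}; s=${3:-0}
    # Drop one leading zero so that "08" is not parsed as an octal number.
    h=${h#0}; m=${m#0}; s=${s#0}
    echo $(( ${h:-0} * 3600 + ${m:-0} * 60 + ${s:-0} ))
)

walltime_to_seconds 5         # prints: 18000
walltime_to_seconds 1:22      # prints: 4920
walltime_to_seconds 0:03:30   # prints: 210
```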

By default, you can only connect to nodes in your reservation, and only using the oarsh connector to go from one node to the other. The connector supports the same options as the classical ssh command, so it can be used as a replacement for software expecting ssh.

oarsh is a wrapper around ssh that enables the tracking of user jobs inside compute nodes (for example, to enforce the correct sharing of resources when two different jobs share a compute node). If your application does not support choosing a different connector, it is possible to avoid using oarsh for ssh with the allow_classic_ssh job type, as in

Reservations in advance, job management, and selection of resources

- Reservations in advance

By default, oarsub will give you resources as soon as possible. You can also reserve resources at a specific time in the future, with the -r parameter:
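A minimal sketch of a reservation in advance is given below. The `-r` parameter comes from the text; writing the start date as 'YYYY-MM-DD HH:MM:SS' is an assumption, and the function name and example date are hypothetical.

```shell
# Reserve hosts for a given walltime, starting at a given date.
# $1 = number of hosts, $2 = walltime, $3 = start date
# Assumption: the date is written as 'YYYY-MM-DD HH:MM:SS'.
reserve_at() {
    oarsub -l "nodes=$1,walltime=$2" -r "$3"
}

# Example (to be run on a site frontend):
#   reserve_at 3 3 '2030-01-15 16:30:00'
```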

- Job management

To list jobs currently submitted, use the oarstat command (use -u option to see only your jobs). A job can be deleted with:

| Note | |

|---|---|

Remember that all your resource reservations must comply with the Usage Policy. You can verify your reservations' compliance with the Policy with | |

- Selection of resources using OAR properties

The OAR nodes database contains a set of properties for each node, that can be used to request specific resources:

- Nodes from a given cluster

- Nodes with Infiniband FDR interfaces

- Nodes with power sensors and GPUs

- Since -p accepts SQL, you could write:

      fnancy: oarsub -p "wattmeter='YES' and host not in ('graphene-140.nancy.grid5000.fr', 'graphene-141.nancy.grid5000.fr')" -l nodes=5,walltime=2 -I

The OAR properties available on each site are listed on the Monika pages linked from Status (example page for Nancy). The full list of OAR properties is available on this page.
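Property expressions can get long, so it may help to build them with a small helper. The sketch below assembles a `-p` expression that selects one cluster and optionally excludes some hosts. It assumes nodes expose a `cluster` property next to the `host` property used above; the helper name `oar_filter` is hypothetical.

```shell
# Build a -p expression selecting one cluster and excluding some hosts.
# $1 = cluster name, remaining arguments = host names to exclude.
# Assumption: nodes have a "cluster" property in the OAR database.
oar_filter() (
    filter="cluster='$1'"
    shift
    if [ "$#" -gt 0 ]; then
        list=""
        for h in "$@"; do
            list="${list:+$list, }'$h'"   # comma-separated, quoted names
        done
        filter="$filter and host not in ($list)"
    fi
    printf '%s\n' "$filter"
)

# Example (to be run on a site frontend):
#   oarsub -p "$(oar_filter grisou grisou-1.nancy.grid5000.fr)" -l nodes=5,walltime=2 -I
```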

- Extending the duration of a reservation

Provided that the resources are still available after your job, you can extend its duration (walltime) using e.g.:

This will request to add one and a half hours to job 12345.

The walltime change request status can be seen using either:

or in the event logs of the job using:

For more details, see the oarwalltime section of the Advanced OAR tutorial.
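The walltime extension steps above can be sketched as follows. The command name `oarwalltime` comes from this page, but its argument syntax (job id followed by `+DURATION`, or the job id alone to see the request status) is an assumption to check against the Advanced OAR tutorial. The function names are hypothetical.

```shell
# Ask for extra walltime on a job.
# $1 = job id, $2 = extra time (for example 1:30)
# Assumption: oarwalltime takes the job id followed by "+DURATION".
extend_job() {
    oarwalltime "$1" "+$2"
}

# Show the status of the walltime change request for a job.
# Assumption: oarwalltime with only a job id prints that status.
walltime_request_status() {
    oarwalltime "$1"
}

# Example (to be run on a site frontend):
#   extend_job 12345 1:30
#   walltime_request_status 12345
```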

Monitoring your nodes

Grid'5000 provides two different services to monitor your nodes during your experiment: Kwapi and Ganglia.

Ganglia uses a service running on the nodes to collect information. If you point your browser to https://intranet.grid5000.fr/ganglia/, you will see all the metrics collected on Grid'5000. If you navigate first to the site, then to the node you have reserved, you will see the metrics collected for one node.

Electrical power consumption monitoring is available on some Grid'5000 clusters using Kwapi. It does not require any specific application running on the nodes. Go to the Kwapi page of Lyon, and visualize the power consumption of nodes you reserved (you might need to switch to another site using the Other sites menu).

The raw values and the historical data for both monitoring systems are also available through the Grid'5000 API. You can learn more about power monitoring in the Energy consumption monitoring tutorial.

Deploying your nodes to get root access and create your own experimental environment

Using oarsub gives you access to resources configured in their default (standard) environment, with a set of software selected by the Grid'5000 team. You can use such an environment to run Java or MPI programs, boot virtual machines with KVM, or access a collection of scientific software. However, you cannot deeply customize this software environment.

Most Grid'5000 users use resources in a different, much more powerful way: they use Kadeploy to re-install the nodes with their software environment for the duration of their experiment, using Grid'5000 as a Hardware-as-a-Service Cloud. This enables them to use a different Debian version, another Linux distribution, or even Windows, and get root access to install the software stack they need.

Deploying nodes with Kadeploy

Reserve one node (the deploy job type is required to allow deployment with Kadeploy):

Start a deployment of the debian10-x64-base image on that node (this takes 5 to 10 minutes):

The -f parameter specifies a file containing the list of nodes to deploy. Alternatively, you can use -m to specify a node (such as -m gros-42.nancy.grid5000.fr). The -k parameter asks Kadeploy to copy your SSH key to the node's root account after deployment, so that you can connect without a password. If you don't specify it, you will need to provide a password to connect. However, SSH is often configured to disallow root login using a password. The root password for all Grid'5000-provided images is grid5000.
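The reservation and deployment steps above can be sketched as two wrapper functions. The `deploy` job type and the `-f` and `-k` parameters come from the text. Selecting the environment with `-e` and reading the node list from `$OAR_NODE_FILE` are assumptions, and the function names are hypothetical.

```shell
# Reserve one node with the deploy job type, in interactive mode.
reserve_for_deploy() {
    oarsub -t deploy -l nodes=1 -I
}

# Deploy an environment on all nodes of the current job and copy your
# SSH key to the root account.
# $1 = environment name (defaults to debian10-x64-base)
# Assumptions: -e selects the environment, and OAR exports the path of
# the node list file as $OAR_NODE_FILE inside the job.
deploy_env() {
    kadeploy3 -f "$OAR_NODE_FILE" -e "${1:-debian10-x64-base}" -k
}

# Example (to be run on a site frontend):
#   reserve_for_deploy
#   deploy_env debian10-x64-base
```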

Reference images are named version-architecture-type, where version is the Debian version. The Debian version can be debian10 (Debian 10 "Buster", released in 07/2019) or debian9 (Debian 9 "Stretch", released in 06/2017). The architecture is x64 (in the past, 32-bit images were also provided). The type can be:

min = a minimalistic image (standard Debian installation) with minimal Grid'5000-specific customization (the default configuration provided by Debian is used): addition of an SSH server, network interface firmware, etc. (see changes).

base = min + various Grid'5000-specific tuning for performance (TCP buffers for 10 GbE, etc.), and a handful of commonly-needed tools to make the image more user-friendly (see changes). Those could incur an experimental bias.

xen = base + Xen hypervisor Dom0 + minimal DomU (see changes).

nfs = base + support for mounting your NFS home and accessing other storage services (Ceph), and using your Grid'5000 user account on deployed nodes (LDAP) (see changes).

big = nfs + packages for development, system tools, editors, shells (see changes).

And for the standard environment:

std = big + integration with OAR. Currently, the debian10-x64-std environment is the one used on the nodes if neither you nor another user has deployed another environment with Kadeploy (see changes).

As a result, the environments you are the most likely to use are debian10-x64-min, debian10-x64-base, debian10-x64-xen, debian10-x64-nfs, debian10-x64-big, and their debian9 counterparts.
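The naming scheme can be illustrated with a small helper that composes an environment name from a version and a type, and rejects types that are not in the list above. The helper name `g5k_env_name` is hypothetical, and it does not check that the resulting environment actually exists.

```shell
# Compose a reference environment name: VERSION-ARCH-TYPE.
# The architecture is fixed to x64; only the types listed above are accepted.
# $1 = version (for example debian10), $2 = type
g5k_env_name() {
    case "$2" in
        min|base|xen|nfs|big|std)
            printf '%s-x64-%s\n' "$1" "$2"
            ;;
        *)
            echo "unknown environment type: $2" >&2
            return 1
            ;;
    esac
}

g5k_env_name debian10 base   # prints: debian10-x64-base
g5k_env_name debian9 nfs     # prints: debian9-x64-nfs
```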

Environments are also provided and supported for some other distributions, only in the min variant:

- Ubuntu: ubuntu1804-x64-min and ubuntu2004-x64-min

- CentOS: centos7-x64-min and centos8-x64-min

Last, an environment for the upcoming Debian version (also known as Debian testing) is provided: debiantesting-x64-min (only min as well).

The list of all provided environments is available using kaenv3 -l. Note that environments are versioned, and old versions of reference environments are available in /grid5000/images/ on each frontend (as well as images that are no longer supported, such as CentOS 6 images). This can be used to reproduce experiments even months or years later, still using the same software environment.

Customizing nodes and accessing the Internet

Now that your nodes are deployed, the next step is usually to copy data (usually using scp or rsync) and install software.

First, connect to the node as root:

You can access websites outside Grid'5000. For example, to fetch the Linux kernel sources:

| Warning |
|---|
| Please note that, for legal reasons, your Internet activity from Grid'5000 is logged and monitored. |

Let's install stress (a simple load generator) on the node from Debian's APT repositories:
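A possible way to run this installation from the frontend, rather than from a shell on the node, is sketched below. It assumes the node was deployed with your SSH key copied to the root account; the node name in the example and the function name are hypothetical.

```shell
# Install the stress package on a deployed node, as root, over SSH.
# $1 = node name
install_stress() {
    ssh "root@$1" "apt-get update && apt-get install -y stress"
}

# Example (to be run on a site frontend, after deployment):
#   install_stress gros-42.nancy.grid5000.fr
```

Wrapping the command this way is also a first step towards the scripted installation recommended further down this page.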

Installing all the software needed for your experiment can be quite time-consuming. There are three approaches to avoid spending time at the beginning of each of your Grid'5000 sessions:

- Always deploy one of the reference environments, and automate the installation of your software environment after the image has been deployed. You can use a simple bash script, or more advanced tools for configuration management such as Ansible, Puppet or Chef.

- Register a new environment with your modifications, using the tgz-g5k tool. More details are provided in the Advanced Kadeploy tutorial.

- Use a tool to generate your environment image from a set of rules, such as Kameleon or Puppet. The Grid'5000 technical team uses those two tools to generate all Grid'5000 environments in a clean and reproducible process.

All those approaches have different pros and cons. We recommend that you start by scripting software installation after deploying a reference environment, and that you move to other approaches when this proves too limited.

Monitoring deployed nodes

To keep experiment artefacts to a minimum, monitoring is not activated by default on reference environments. On a *-min image, you will first need to install the ganglia-monitor package:

You need to start the ganglia-monitor service to get meaningful results from the metrology API. You can do this using the following:

Controlling nodes (rebooting, accessing the serial console)

Grid'5000 provides you with out-of-band control of your nodes. You can access the node's serial console, trigger a reboot, or even power off the node. This is very useful in case your node loses network connectivity, or simply crashes.

Using another terminal, connect again to the site's frontend (for Nancy: fnancy), and then connect to your node's serial console, using:

As a reminder, the root password for all Grid'5000-provided images is grid5000. At the end of this tutorial, when you need to exit that console, press '&' then '.' (more details about escape sequences are available on the Kaconsole page).

Using yet another terminal, connect again to the frontend. Now, shut down the node, and watch it go down in the console:

After it has been shut down, check its status, and turn it on again:

Alternatively, you could have rebooted the node, using:

Checking nodes' changes over time

The Grid'5000 team puts a strong focus on ensuring that nodes meet their advertised capabilities. A detailed description of each node is stored in the Reference API, and the node is frequently checked against this description in order to detect hardware failures or misconfigurations.

To see the description of grisou-1.nancy.grid5000.fr, use:
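As an illustration, the sketch below derives a Reference API URL from a node's fully qualified name. The URL layout (`/stable/sites/SITE/clusters/CLUSTER/nodes/NODE` on api.grid5000.fr) is an assumption that this page does not state, and the helper name `node_api_url` is hypothetical.

```shell
# Derive the Reference API URL of a node from its fully qualified name.
# Assumption: node descriptions are published under
#   https://api.grid5000.fr/stable/sites/SITE/clusters/CLUSTER/nodes/NODE
# The function body runs in a subshell so its variables stay local.
node_api_url() (
    fqdn=$1
    node=${fqdn%%.*}      # grisou-1
    rest=${fqdn#*.}       # nancy.grid5000.fr
    site=${rest%%.*}      # nancy
    cluster=${node%-*}    # grisou
    printf 'https://api.grid5000.fr/stable/sites/%s/clusters/%s/nodes/%s\n' \
        "$site" "$cluster" "$node"
)

# Example (to be run on a frontend):
#   curl "$(node_api_url grisou-1.nancy.grid5000.fr)"
```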

Cleaning up after your reservation

At the end of your resources reservation, the infrastructure will automatically reboot the nodes to put them back in the default (standard) environment. There's no action needed on your side.

Going further

In this tutorial, you learned the basics of Grid'5000:

- The general structure of Grid'5000, and how to move between sites

- How to manage your data (one NFS server per site; remember: it is not backed up)

- How to find and reserve resources using OAR and the oarsub command

- How to get root access on nodes using Kadeploy and the kadeploy3 command

You should now be ready to use Grid'5000.

Additional tutorials

There are many more tutorials available on the Users Home. These tutorials cover more advanced aspects of Grid'5000, such as:

- using KaVLAN to isolate your experiments at the networking level

- deploying your own, possibly customized, OpenStack Cloud inside Grid'5000 using ENOS

- accessing data from various monitoring systems (power consumption, network, system metrics)

- using Grid'5000 for HPC experiments, using MPI or accelerators (GPGPU or Xeon Phi)

- using the Grid'5000 REST API or a scripting library to script your experiment by automating resources selection, reservation and deployment

- performing experiments using large amounts of data with the different storage solutions available

- more advanced usage of OAR – including the use of OAR Grid to manage multi-sites jobs – and Kadeploy