FAQ

About this document

How to add/correct an entry to the FAQ?

Publications and Grid'5000

Is there an official acknowledgement ?

Yes there is: you agreed to it when accepting the usage policy. As the policy might have been updated since, please refer to the latest version. You should use it on all publications presenting results obtained (even partially) using Grid'5000.

How to mention Grid'5000 in HAL ?

HAL is an open archive that you are invited to use. If you do so, the recommended way to mention Grid'5000 is to use the collaboration field of the submission form, with the keyword Grid'5000, capitalized as such.

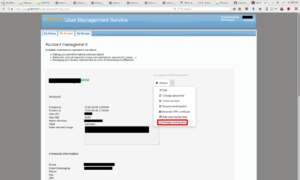

Account management

I forgot my password, how can I retrieve it ?

To retrieve your password, you can use this form, or ask your account manager to reset it.

My account expired, how can I extend it?

Use the account management interface (Manage account link in the sidebar).

Why doesn't my home directory contain the same files on every site?

Every site has its own file server, so it is your responsibility to synchronize personal data between your home directories on the different sites. You may use the rsync command to synchronize your home directory on a remote site with the local one (be careful: this deletes any file on the remote site that does not exist in the local home directory, and overwrites any file that differs):

rsync -n --delete -avz ~/ frontend.site.grid5000.fr:~

NB: remove the -n argument once you are sure you want more than a dry run.
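To get a feel for what -n and --delete do before touching real data, the following sketch runs the same options on two scratch directories. The directory and file names are made up for the illustration; only the rsync options come from the command above.

```shell
#!/bin/sh
# Scratch directories standing in for a local and a remote home (hypothetical names).
demo=$(mktemp -d)
src="$demo/local_home"
dst="$demo/remote_home"
mkdir "$src" "$dst"
echo "kept"  > "$src/results.txt"
echo "stale" > "$dst/only_on_remote.txt"

# Dry run (-n): rsync reports what it would copy and delete, but changes nothing.
rsync -n --delete -avz "$src/" "$dst/"
after_dry_run=$(ls "$dst")    # still only_on_remote.txt

# Real run: results.txt is copied, only_on_remote.txt is deleted.
rsync --delete -avz "$src/" "$dst/"
after_real_run=$(ls "$dst")   # now results.txt

echo "after dry run:  $after_dry_run"
echo "after real run: $after_real_run"
```

The trailing slash on the source directory tells rsync to copy the directory's contents rather than the directory itself.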

How to get my home mounted on deployed nodes?

This is completely automatic if you deploy a *-nfs or *-big image (automount).

- You can connect using your own username and should land in your home;

- If connecting as root, once connected to the node, change directory to your home and it will be mounted:

cd /home/username

How to restore a wrongly deleted file?

No backup facility is provided by the Grid'5000 platform. Please be careful when deleting files, and back up your data using external backup services.

What about disk quotas ?

See the section about the /home in the Storage page.

How do I unsubscribe from the mailing-list ?

Users' mailing-list subscription is tied to your Grid'5000 account. You can configure your subscriptions in your account settings:

- Log in to https://api.grid5000.fr/ui/account

- Go to the "My account" tab, then click on the "Actions" button, then choose "Manage mailing lists"

Alternative method: configure Sympa to stop receiving any email from the list (while still being subscribed):

- If you haven't done it before, ask for a password on sympa.inria.fr from this form: https://sympa.inria.fr/sympa/firstpasswd/. Use the email address you used to register to Grid'5000.

- Connect to https://sympa.inria.fr using the email address you used to register to Grid'5000 and your sympa.inria.fr password.

- From the left panel, select users_grid5000. Then go to your subscriber options (Options d'abonné) and in the reception field (Mode de réception), select suspend (interrompre la réception des messages).

Network access to/from Grid'5000

How can I connect to Grid'5000 ?

This is documented at length in the Getting Started tutorial.

You should be able to access Grid'5000 from anywhere on the Internet, by connecting to access.grid5000.fr using SSH. You will need properly configured SSH keys (please refer to the page dedicated to SSH if this is unfamiliar), as this machine will not allow you to log in using a password.

Some sites have an access.site.grid5000.fr machine (replace site with the actual site name), which is only reachable from IP addresses of the local laboratory.

How to connect from different workstations with the same account?

You can associate several public SSH keys with your account. To do so:

- log in,

- go to User Portal > Manage Account,

- select the My account top tab,

- select the SSH keys left tab,

- then manage your keys:

  - add a new public SSH key;

  - remove an old one.

More information in the SSH page and the Public key authentication page.

How to directly connect by SSH to any machine within Grid'5000 from my workstation?

This tip consists of customizing the SSH configuration file ~/.ssh/config (compatible with the OpenSSH ssh client):

Host *.g5k
  User login
  ProxyCommand ssh login@access.grid5000.fr -W "$(basename %h .g5k):%p"

You can then connect to any machine using ssh machine.site.g5k
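The ProxyCommand line relies on basename to strip the .g5k suffix, so that the access machine receives the real internal host name. Here is a minimal sketch of that expansion. The node name node-1.site is hypothetical, and %h and %p are replaced by hand with the values ssh would substitute.

```shell
#!/bin/sh
host="node-1.site.g5k"   # what ssh substitutes for %h (hypothetical name)
port=22                  # what ssh substitutes for %p

# Same expression as in the ProxyCommand: strip the .g5k suffix.
target="$(basename "$host" .g5k):$port"
echo "$target"           # node-1.site:22

# Equivalent POSIX shell parameter expansion, without the external basename command.
echo "${host%.g5k}:$port"
```

Both forms produce the host:port pair that is passed to ssh -W on the access machine.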

Please have a look at the SSH page for a deeper understanding and more information.

For users of PowerShell on Microsoft Windows, which also ships with the OpenSSH ssh client, the configuration must be adapted, as the basename command may not be available.

Note: the Grid'5000 internal network uses private IPv4 addresses, which are not directly reachable from outside Grid'5000.

Is access to the Internet possible from nodes?

Full access to the Internet is allowed from the Grid'5000 network.

All IPv4 communication is NATed, while with IPv6 each node uses its own public IPv6 address.

What is the source address of outgoing traffic from Grid'5000 nodes to the Internet?

The outgoing IPv4 traffic from Grid'5000 nodes to the Internet is NATed. The public IPv4 addresses used as sources for the NATed packets are:

- 194.254.60.35 (nr-lil-536.grid5000.fr)

- 194.254.60.13 (nr-sop-535.grid5000.fr)

How can I connect to an HTTP or HTTPS service running on a node?

See the HTTP/HTTPs_access page.


Could I access Grid'5000 nodes directly from the internet?

SSH and HTTP/HTTPS have lighter, dedicated solutions: see the SSH page and the HTTP/HTTPs_access page. For other protocols, see the VPN and Reconfigurable_Firewall pages.

Software installation issues

What is the general philosophy ?

This is how things should work: a basic set of software is installed on the frontends and in the nodes' standard environment at each site. If you need other software packages on nodes, you can create a Kadeploy image that includes them and deploy it. You can also use sudo-g5k. If you think some software should be installed by default, you can contact the support-staff.

About resources reservations (jobs)

How can I check whether my reservations are respecting the Grid'5000 Usage Policy?

You can use the usagepolicycheck script, available on all frontends. Run usagepolicycheck -t to check whether your current reservations respect the policy, and usagepolicycheck -h to see the other options.

To help you comply with the usage policy, you can use the day and night OAR job types to fit batch jobs inside day vs. night/weekend time frames. More details are available in the Advanced OAR guide.

How can I reserve resources purchased by my team for a longer duration (e.g. 1 month)?

If your team purchased specific computing resources (you already have 'p1' access to them), and you need a reservation that is longer than 1 week, you must email the Abaca/SLICES-FR Technical Team <support-staff@lists.grid5000.fr>, with your team leader in Cc, and the following information:

Subject: Long job execution request

* site:
* cluster:
* number of nodes:
* date/time of the reservation:
* duration of the reservation:
* short explanation of the need for a long job:

How can I execute a campaign of tasks within previously reserved resources? (or smaller job in a bigger job)

This can be done either with OAR's container jobs, or with GNU Parallel:

- If all jobs (the container and the inner jobs) belong to the same user, GNU Parallel should be preferred.

- Container jobs are mostly relevant for tutorials or teaching labs, where jobs are created by a set of different users. More information in Advanced_OAR#Container_jobs.

About checkpoint/restart support of job

The Grid'5000 OAR service setup does not provide a seamless checkpoint/restart mechanism for jobs. While this is a much-wanted feature, especially for long-running tasks that have to be split in order to fit the platform usage policy, we think it is better to let users take care of it themselves. Indeed, while some techniques exist, such as CRIU, none seems satisfactory enough for a sustainable deployment in Grid'5000.

Note that OAR provides a mechanism to trigger an application to checkpoint itself, and to get a checkpointed job resubmitted.
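From the application's side, this mechanism can be sketched as a job script that saves its progress when it receives a signal, then exits with a code asking to be resubmitted. The sketch below assumes the signal is SIGUSR2 and that exit code 99 requests resubmission; both are assumptions to check against the OAR documentation. The names run_job, STATE_FILE and the step counter are hypothetical.

```shell
#!/bin/sh
# Hypothetical checkpoint location; a real job would use a path in its home.
STATE_FILE="${STATE_FILE:-/tmp/job_state.$$}"

run_job() {
    total_steps=$1
    step=0
    # Resume from the last checkpoint if one exists.
    if [ -s "$STATE_FILE" ]; then
        step=$(cat "$STATE_FILE")
    fi

    # Assumption: the scheduler sends SIGUSR2 shortly before the walltime.
    # On reception, save progress and exit with the (assumed) resubmit code.
    trap 'echo "$step" > "$STATE_FILE"; exit 99' USR2

    while [ "$step" -lt "$total_steps" ]; do
        sleep 1                  # stands in for one unit of real work
        step=$((step + 1))
    done
    rm -f "$STATE_FILE"          # finished: no checkpoint needed any more
}
```

When the job is run again with the same STATE_FILE, run_job picks up at the saved step instead of starting over.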

Continuous Integration (CI) jobs

Running CI tasks on Grid'5000 is allowed, but special precautions must be taken:

- Inform the support staff that you plan to use Grid'5000 for CI

- Use (create) a dedicated user account (not your personal user account) that reflects your project's name, and make sure that the Professional status/Employee type is set to bot. This is important to allow differentiating between your own personal usage, and usage potentially generated by others through CI (however, remember that you remain responsible for the usage made by your project's bot account). It also allows the testbed operators to track usage generated by CI (for statistics).

- If you need to share data between your personal user account and your bot account, you can use a Group Storage.

- If you use GitHub, configure GitHub Actions to require approval before running workflows from external collaborators.

Orchestrating such tasks can be done using the Grid'5000 REST API, together with the client libraries described on the Software and Experiment_scripting_tutorial pages.

Several setups are possible to run such tasks from GitLab (and manage credentials):

- Use an existing GitLab runner (such as GitLab's shared ones), store credentials (Grid'5000 user account and password) in GitLab secrets, and create a job that will reserve resources as needed (typically using the Grid'5000 API). See for example test_invivo_g5k* in EnOSLib's .gitlab-ci.yml.

- Run your own GitLab runner on a Grid'5000 frontend, as documented in the GitLab CI gallery. In that case, you do not need to store your Grid'5000 user account and password in your home directory (because users are automatically identified when using the API from frontends). However, you will need to store the GitLab runner token in your home directory, which might be a security issue (homes are not suitable for storing sensitive data).

- Use a Persistent Virtual Machine to host your GitLab runner service. All credentials (Grid'5000's and the runner's) are stored in the virtual machine.
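For the first option in the list above, the CI job has to turn its GitLab secrets into an API request. The sketch below only builds the request body and shows the call as a comment. The endpoint path, the resources and command field names, and the G5K_USER, G5K_PASSWORD and SITE variables are assumptions for illustration; check them against the Grid'5000 API documentation before use.

```shell
#!/bin/sh
# Build the JSON body of a job submission (assumed field names).
# $1: resource request, $2: command to run on the reserved nodes.
# Note: no JSON escaping is done, so avoid quotes and backslashes in arguments.
build_job_request() {
    printf '{"resources": "%s", "command": "%s"}\n' "$1" "$2"
}

body=$(build_job_request "nodes=1,walltime=00:30" "./run_tests.sh")
echo "$body"

# The CI job would then send it with credentials taken from CI secrets, e.g.:
#   curl -u "$G5K_USER:$G5K_PASSWORD" \
#        -H "Content-Type: application/json" -X POST -d "$body" \
#        "https://api.grid5000.fr/stable/sites/$SITE/jobs"
```

Keeping the credentials in CI secrets rather than in the repository means they only appear in the environment of the running job.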

Maintenance on Grid'5000

A maintenance slot is planned every Thursday on Grid'5000.

If a maintenance operation may impact users' jobs, we announce it on the users@lists.grid5000.fr mailing list.

When a maintenance operation is announced, you can follow its progress on the platform's operation schedule.

How to use MPI in Grid'5000?

See the MPI Tutorial.

See Storage.