Sophia:Network

See also: Hardware description for Sophia

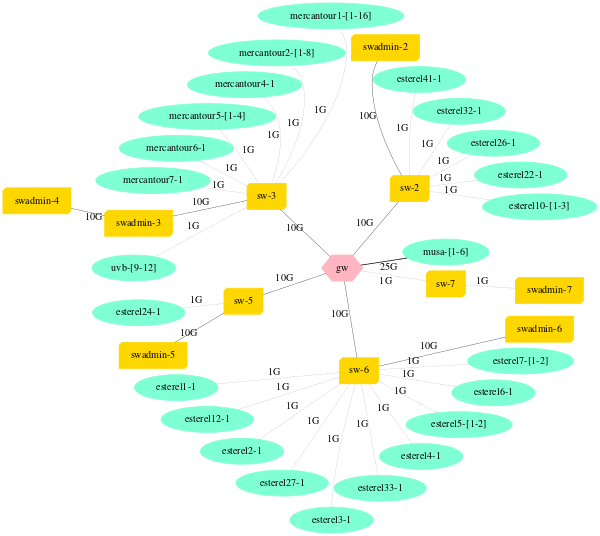

Overview of Ethernet network topology

Network device models

- gw: Aruba 8325-48Y8C

- sw-1: Aruba 6300M

- sw-2: Aruba 6300M

- sw-3: Aruba 6300M

- sw-4: Dell PowerSwitch N1548

- sw-7: Dell PowerSwitch N4032

- sw-9: Aruba 6300M

- swadmin-2: Aruba 2930F

- swadmin-3: Aruba 2930F

- swadmin-4: Aruba 2930F

- swadmin-5: Aruba 2930F

- swadmin-6: Aruba 2930F

- swadmin-7: Aruba 2930F

- swadmin-8: Aruba 2930F

- swadmin-9: Aruba 2930F

More details (including address ranges) are available from the Grid5000:Network page.

High Performance networks

Infiniband 40G on uvb

uvb cluster nodes are all connected to 40G Infiniband switches. Since these nodes are shared with the Nef production cluster at INRIA Sophia, we use Infiniband partitions to isolate them from Nef while they are available on Grid5000. The partition dedicated to Grid5000 is 0x8100, so the IPoIB interfaces on the nodes are named ib0.8100 instead of ib0.
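The interface name follows directly from the partition key (P_Key). The sketch below assumes the usual Linux IPoIB convention of naming a child interface `<parent>.<pkey as 4 hex digits>`. It also uses the InfiniBand rule that bit 15 of a P_Key marks full (1) versus limited (0) membership, which is why 0x8100 corresponds to partition 0x0100 with full membership. The function names are hypothetical and only illustrate the arithmetic.

```python
# Hypothetical helpers: relate an InfiniBand P_Key to the IPoIB child
# interface name and to its membership type.
FULL_MEMBER_BIT = 0x8000  # bit 15 of a P_Key: 1 = full member, 0 = limited member


def ipoib_child_name(parent: str, pkey: int) -> str:
    """Return the child interface name, assuming the '<parent>.<pkey hex>' convention."""
    return f"{parent}.{pkey:04x}"


def is_full_member(pkey: int) -> bool:
    """True if the full-membership bit is set in the P_Key."""
    return bool(pkey & FULL_MEMBER_BIT)


def partition_base(pkey: int) -> int:
    """Strip the membership bit to get the 15-bit partition number."""
    return pkey & 0x7FFF


if __name__ == "__main__":
    pkey = 0x8100  # Grid5000 partition on the uvb nodes
    print(ipoib_child_name("ib0", pkey))
    print(is_full_member(pkey))
    print(hex(partition_base(pkey)))
```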

To use the native openib driver (BTL) of Open MPI, you must set the MCA parameter btl_openib_pkey = 0x8100.
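Open MPI accepts MCA parameters either on the `mpirun` command line (`--mca <name> <value>`) or through environment variables named `OMPI_MCA_<name>`. The minimal sketch below builds both forms for `btl_openib_pkey`. It assumes an Open MPI release that still ships the openib BTL; the program name `./my_app` and the helper names are hypothetical.

```python
import os
import shlex
from typing import Dict, List, Optional

PKEY = "0x8100"  # Grid5000 partition key on the uvb nodes


def mpirun_command(np: int, program: str) -> List[str]:
    """Build an mpirun argument list passing btl_openib_pkey via --mca."""
    return ["mpirun", "--mca", "btl_openib_pkey", PKEY, "-np", str(np), program]


def mpi_env(base: Optional[Dict[str, str]] = None) -> Dict[str, str]:
    """Alternative: return an environment with OMPI_MCA_btl_openib_pkey set."""
    env = dict(os.environ if base is None else base)
    env["OMPI_MCA_btl_openib_pkey"] = PKEY
    return env


if __name__ == "__main__":
    # Prints: mpirun --mca btl_openib_pkey 0x8100 -np 4 ./my_app
    print(shlex.join(mpirun_command(4, "./my_app")))
```

The command-line form keeps the setting visible in job scripts, while the environment form applies to every `mpirun` launched from that environment.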

Nodes

uvb-9 to uvb-12 have one QDR Infiniband card.

- Card model: Mellanox Technologies MT26428 [ConnectX IB QDR, PCIe 2.0 5GT/s]

- Driver: mlx4_ib

- OAR property: ib_rate=40

- IP over IB addressing: uvb-[9..12]-ib0.sophia.grid5000.fr (172.18.132.[1..44])
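The addressing line uses the `[a..b]` range notation for both hostnames and addresses. The hypothetical helper below expands that notation into explicit lists, which can be useful for scripts that build host files. It expands the hostname pattern and the address pattern independently and does not assume any particular host-to-address mapping.

```python
import re
from typing import List

# Matches an inclusive numeric range written as "[a..b]"
RANGE = re.compile(r"\[(\d+)\.\.(\d+)\]")


def expand(pattern: str) -> List[str]:
    """Expand every '[a..b]' range in pattern, left to right."""
    m = RANGE.search(pattern)
    if m is None:
        return [pattern]
    lo, hi = int(m.group(1)), int(m.group(2))
    out: List[str] = []
    for i in range(lo, hi + 1):
        # Substitute this value, then expand any remaining ranges recursively
        out.extend(expand(pattern[:m.start()] + str(i) + pattern[m.end():]))
    return out


if __name__ == "__main__":
    for host in expand("uvb-[9..12]-ib0.sophia.grid5000.fr"):
        print(host)
    addresses = expand("172.18.132.[1..44]")
    print(addresses[0], addresses[-1], len(addresses))
```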

Interconnection

The Infiniband network is physically isolated from the Ethernet networks, so the IP over IB network is isolated as well: there is no interconnection at either the data link layer or the network layer.