Nancy:Network

Jump to navigation

Jump to search

No edit summary |

No edit summary |

||

| (16 intermediate revisions by 7 users not shown) | |||

| Line 2: | Line 2: | ||

{{Portal|Network}} | {{Portal|Network}} | ||

{{Portal|User}} | {{Portal|User}} | ||

'''See also:''' [[Nancy:Hardware|Hardware description for Nancy]] | |||

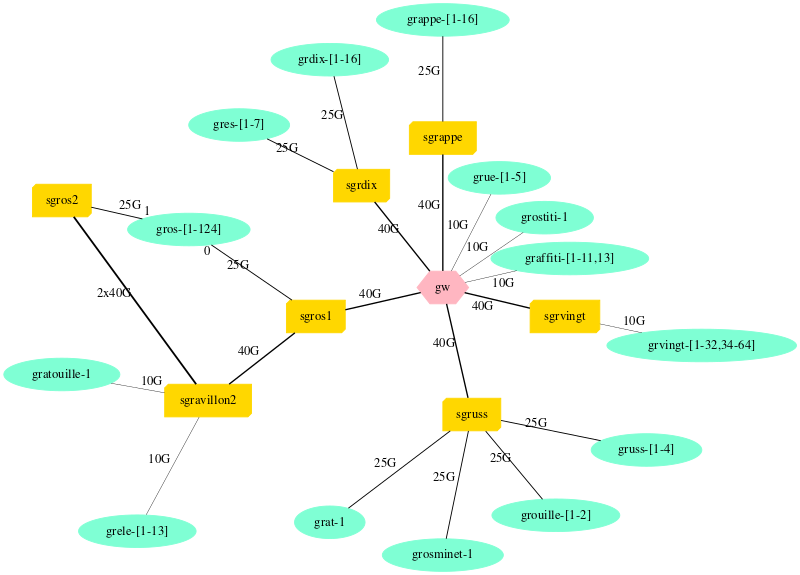

= Overview of Ethernet network topology = | = Overview of Ethernet network topology = | ||

[[File:NancyNetwork. | [[File:NancyNetwork.svg|800px]] | ||

{{Nancy:GeneratedNetwork}} | {{:Nancy:GeneratedNetwork}} | ||

= HPC Networks = | = HPC Networks = | ||

Several HPC Networks are available. | |||

== Omni-Path 100G on grele and grimani nodes == | == Omni-Path 100G on grele and grimani nodes == | ||

| Line 15: | Line 20: | ||

*<code class="host">grimani-1</code> to <code class="host">grimani-6</code> have one 100GB Omni-Path card. | *<code class="host">grimani-1</code> to <code class="host">grimani-6</code> have one 100GB Omni-Path card. | ||

* Card Model : Intel Omni-Path Host Fabric adaptateur series 100 1 Port PCIe x8 | * Card Model: Intel Omni-Path Host Fabric adaptateur series 100 1 Port PCIe x8 | ||

== | == Omni-Path 100G on grvingt nodes == | ||

There's another, separate Omni-Path network connecting the 64 grvingt nodes and some servers. The topology is a non blocking fat tree (1:1). | |||

Topology, generated from <code>opareports -o topology</code>: | |||

[[File:Topology-grvingt.png|400px]] | |||

More information about using Omni-Path with MPI is available from the [[Run_MPI_On_Grid%275000]] tutorial. | |||

'''NB: OPA (Omni-Path Architecture) is currently not supported on Debian 12 environment.''' | |||

=== Switch === | === Switch === | ||

| Line 39: | Line 42: | ||

=== Interconnection === | === Interconnection === | ||

Omnipath network is physically isolated from Ethernet networks. Therefore, Ethernet network emulated over Infiniband is isolated as well. There isn't any interconnexion, neither at the L2 or L3 layers. | |||

Latest revision as of 08:22, 19 June 2024

See also: Hardware description for Nancy

Overview of Ethernet network topology

[Figure: Nancy Ethernet network topology (NancyNetwork.svg)]

Network device models

- gw: Aruba 8325-48Y8C JL635A

- sgrappe: Dell S5224F-ON

- sgravillon2: Cisco Nexus 9508

- sgrdix: Aruba 8325-48Y8C

- sgrdixib: Mellanox QM8700

- sgrele-opf: Omni-Path

- sgros1: Dell Z9264F-ON

- sgros2: Dell Z9264F-ON

- sgruss: Dell S5224F-ON

- sgrvingt: Dell S4048

More details (including address ranges) are available from the Grid5000:Network page.

HPC Networks

Several HPC Networks are available.

Omni-Path 100G on grele and grimani nodes

- grele-1 to grele-14 have one 100 Gb/s Omni-Path card each.

- grimani-1 to grimani-6 have one 100 Gb/s Omni-Path card each.

- Card model: Intel Omni-Path Host Fabric Adapter 100 Series, 1 port, PCIe x8

Omni-Path 100G on grvingt nodes

A second, separate Omni-Path network connects the 64 grvingt nodes and some servers. Its topology is a non-blocking fat tree (1:1).
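A non-blocking (1:1) fat tree means that, at each switch level, the aggregate bandwidth towards the nodes equals the aggregate bandwidth towards the upper level. In principle, this lets all nodes communicate at full link rate simultaneously. As an illustration only, the minimal Python sketch below computes the oversubscription ratio of a single leaf switch. The function name and the port counts in the usage lines are hypothetical and do not describe the actual grvingt switches.

```python
from fractions import Fraction


def oversubscription_ratio(down_ports: int, up_ports: int,
                           down_gbps: float = 100.0,
                           up_gbps: float = 100.0) -> Fraction:
    """Return downlink bandwidth / uplink bandwidth for one leaf switch.

    A ratio of 1 (written 1:1) means the switch is non-blocking: traffic
    from all attached nodes can leave through the uplinks at full rate
    at the same time. A ratio of 2 (2:1) means the uplinks can carry
    only half of the nodes' combined bandwidth.
    """
    if down_ports <= 0 or up_ports <= 0:
        raise ValueError("port counts must be positive")
    # Exact rational arithmetic avoids floating-point rounding in the ratio.
    down = Fraction(down_ports) * Fraction(down_gbps)
    up = Fraction(up_ports) * Fraction(up_gbps)
    return down / up


# Hypothetical 48-port leaf switches, all ports at 100 Gb/s.
print(oversubscription_ratio(24, 24))  # 1 -> non-blocking (1:1)
print(oversubscription_ratio(32, 16))  # 2 -> 2:1 oversubscribed
```

Splitting each leaf switch's ports evenly between nodes and uplinks is the usual way to reach 1:1. Any reduction in uplinks trades bisection bandwidth for more node ports per switch.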

Topology, generated from opareports -o topology:

[Figure: grvingt Omni-Path topology (Topology-grvingt.png)]

More information on using Omni-Path with MPI is available in the Run_MPI_On_Grid'5000 tutorial.

NB: OPA (Omni-Path Architecture) is currently not supported in the Debian 12 environments.

Switch

- InfiniBand switch, 4X DDR

- Model based on InfiniScale III

- 1 switching card: Flextronics F-X43M204

- 12 line cards, each with 12 4X DDR ports: Flextronics F-X43M203

Interconnection

The Omni-Path network is physically isolated from the Ethernet networks. Therefore, the Ethernet network emulated over InfiniBand is isolated as well. There is no interconnection at either the L2 or the L3 layer.