Grid5000:Home


Grid'5000 is a large-scale and versatile testbed for experiment-driven research in all areas of computer science, with a focus on parallel and distributed computing including Cloud, HPC and Big Data.


Random pick of publications

Five random publications that benefited from Grid'5000 (at least 2779 overall):

- Mathieu Bacou. FaaSLoad: fine-grained performance and resource measurement for function-as-a-service. 2024. hal-04836444

- Chaima Zoghlami, Rahim Kacimi, Riadh Dhaou. Leveraging RL for Efficient Collection of Perception Messages in Vehicular Networks. Global Information Infrastructure and Networking Symposium (GIIS 2024), Feb 2024, Dubai, United Arab Emirates. To appear. hal-04408979

- Govind KP, Guillaume Pierre, Romain Rouvoy. Studying the Energy Consumption of Stream Processing Engines in the Cloud. IC2E 2023 - 11th IEEE International Conference on Cloud Engineering, IEEE, Sep 2023, Boston (MA), United States. pp.1-9. hal-04164074

- Hee-Soo Choi, Priyansh Trivedi, Mathieu Constant, Karën Fort, Bruno Guillaume. Au-delà de la performance des modèles : la prédiction de liens peut-elle enrichir des graphes lexico-sémantiques du français ? Actes de JEP-TALN-RECITAL 2024. 31ème Conférence sur le Traitement Automatique des Langues Naturelles, volume 1 : articles longs et prises de position, Jul 2024, Toulouse, France. pp.36-49. hal-04623008

- Gustavo Salazar-Gomez, Wenqian Liu, Manuel Alejandro Diaz-Zapata, David Sierra González, Christian Laugier. TLCFuse: Temporal Multi-Modality Fusion Towards Occlusion-Aware Semantic Segmentation. IV 2024 - 35th IEEE Intelligent Vehicles Symposium, Jun 2024, Jeju Island, South Korea. pp.2110-2116, 10.1109/IV55156.2024.10588460. hal-04717193

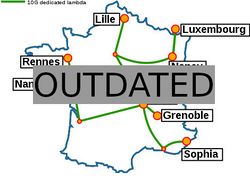

Grid'5000 sites

Current funding

Since June 2008, Inria has been the main contributor to Grid'5000 funding.

- INRIA
- CNRS
- Universities: Université Grenoble Alpes, Grenoble INP
- Regional councils: Aquitaine