Grid5000:Home: Difference between revisions


Revision as of 19:14, 3 May 2018


Grid'5000 is a large-scale and versatile testbed for experiment-driven research in all areas of computer science, with a focus on parallel and distributed computing including Cloud, HPC and Big Data. Key features:


Random pick of publications

Five random publications that benefited from Grid'5000 (at least 2780 overall):

- Anna Kravchenko, Sjoerd Jacob De Vries, Malika Smaïl-Tabbone, Isaure Chauvot de Beauchêne. HIPPO: HIstogram-based Pseudo-POtential for scoring protein-ssRNA fragment-based docking poses. BMC Bioinformatics, 2024, 10.1186/s12859-024-05733-6. hal-04234486v2

- Maxime Lanvin, Pierre-François Gimenez, Yufei Han, Frédéric Majorczyk, Ludovic Mé, et al. Towards understanding alerts raised by unsupervised network intrusion detection systems. The 26th International Symposium on Research in Attacks, Intrusions and Defenses (RAID), Oct 2023, Hong Kong, China. pp.135-150, 10.1145/3607199.3607247. hal-04172470

- Guillaume Helbecque, Ezhilmathi Krishnasamy, Nouredine Melab, Pascal Bouvry. GPU-Accelerated Tree-Search in Chapel versus CUDA and HIP. 14th IEEE Workshop Parallel / Distributed Combinatorics and Optimization (PDCO 2024), May 2024, San Francisco, United States. 10.1109/IPDPSW63119.2024.00156. hal-04551856

- William Soto, Yannick Parmentier, Claire Gardent. Phylogeny-Inspired Soft Prompts For Data-to-Text Generation in Low-Resource Languages. IJCNLP-AACL 2023: The 13th International Joint Conference on Natural Language Processing and the 3rd Conference of the Asia-Pacific Chapter of the Association for Computational Linguistics, ACL, Nov 2023, Bali, Indonesia. hal-04199557v2

- Chuyuan Li, Maxime Amblard, Chloé Braud. A Semi-supervised Dialogue Discourse Parsing Pipeline. Journées Scientifiques du GDR Lift (LIFT 2023), Nov 2023, Nancy, France. hal-04356416

Latest news


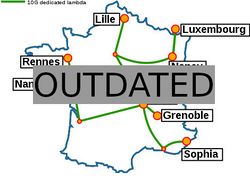

Grid'5000 sites

Current funding

Since June 2008, Inria has been the main contributor to Grid'5000 funding.

- INRIA

- CNRS

- Universities: Université Grenoble Alpes, Grenoble INP

- Regional councils: Aquitaine