Grid5000:Home


Grid'5000 is a precursor infrastructure of SLICES-RI, Scientific Large Scale Infrastructure for Computing/Communication Experimental Studies.


Grid'5000 is a large-scale and flexible testbed for experiment-driven research in all areas of computer science, with a focus on parallel and distributed computing, including Cloud, HPC, Big Data and AI. Key features:

Older documents:


Random pick of publications

Five random publications that benefited from Grid'5000 (at least 2777 overall):

- Duy Van Ngo, Yannick Parmentier. Towards Sentence-level Text Readability Assessment for French. Second Workshop on Text Simplification, Accessibility and Readability (TSAR@RANLP2023), Sep 2023, Varna, Bulgaria. hal-04192063

- Miguel Felipe Silva Vasconcelos. Strategies for operating and sizing low-carbon cloud data centers. Other cs.OH. Université Grenoble Alpes 2020-..; Universidade de São Paulo (Brésil), 2023. English. NNT : 2023GRALM093. tel-04678116

- Ilhem Fajjari, Wassim Aroui, Joaquim Soares, Vania Marangozova. Use Cases Requirements. UGA (Université Grenoble Alpes). 2024. hal-04450028

- Melvin Chelli, Cédric Prigent, René Schubotz, Alexandru Costan, Gabriel Antoniu, et al. FedGuard: Selective Parameter Aggregation for Poisoning Attack Mitigation in Federated Learning. Cluster 2023 - IEEE International Conference on Cluster Computing, Oct 2023, Santa Fe, New Mexico, United States. pp.1-10, 10.1109/CLUSTER52292.2023.00014. hal-04208787

- Quentin Guilloteau, Florina M Ciorba, Millian Poquet, Dorian Goepp, Olivier Richard. Longevity of Artifacts in Leading Parallel and Distributed Systems Conferences: a Review of the State of the Practice in 2023. REP 2024 - ACM Conference on Reproducibility and Replicability, ACM, Jun 2024, Rennes, France. pp.1-14, 10.1145/3641525.3663631. hal-04562691

Latest news

Cluster "vianden" is now in the default queue in Luxembourg

We are pleased to announce that the vianden[1] cluster of Luxembourg is now available in the default queue.

Vianden is a single-node cluster with 8 AMD MI300X GPUs.

The node features:

The AMD MI300X GPUs are not supported by the default Grid'5000 system (Debian 11). However, full GPU functionality is available after deploying the ubuntu2404-rocm environment:

fluxembourg$ oarsub -t exotic -t deploy -p vianden -I

fluxembourg$ kadeploy3 -m vianden-1 ubuntu2404-rocm

More information in the Exotic page.
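For scripted experiments, the reservation and deployment commands shown above can be assembled programmatically. The following is a minimal sketch only: the helper names `reservation_command` and `deployment_command` are hypothetical, and the flags are limited to those that appear in this announcement. The sketch builds and prints the command lines without running them.

```python
from __future__ import annotations

import shlex


def reservation_command(cluster: str, interactive: bool = True) -> list[str]:
    """Build the oarsub argument list from the announcement (hypothetical helper)."""
    # Flags taken verbatim from the announcement: exotic and deploy job types,
    # a cluster selector, and an optional interactive session.
    args = ["oarsub", "-t", "exotic", "-t", "deploy", "-p", cluster]
    if interactive:
        args.append("-I")
    return args


def deployment_command(node: str, environment: str) -> list[str]:
    """Build the kadeploy3 argument list from the announcement (hypothetical helper)."""
    return ["kadeploy3", "-m", node, environment]


if __name__ == "__main__":
    commands = (
        reservation_command("vianden"),
        deployment_command("vianden-1", "ubuntu2404-rocm"),
    )
    for command in commands:
        # shlex.join quotes arguments where needed so the line can be pasted into a shell.
        print(shlex.join(command))
```

Keeping the commands as argument lists rather than strings means they can be passed to `subprocess.run` on a frontend without shell-quoting issues.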

This cluster was funded by the University of Luxembourg.

[1] https://www.grid5000.fr/w/Luxembourg:Hardware#vianden

-- Grid'5000 Team 11:30, 27 June 2025 (CEST)

Cluster "hydra" is now in the default queue in Lyon

We are pleased to announce that the hydra[1] cluster of Lyon is now available in the default queue.

As a reminder, Hydra is a cluster composed of 4 NVIDIA Grace-Hopper servers[2].

Each node features:

Due to its bleeding-edge hardware, the usual Grid'5000 environments are not supported by default on this cluster: Hydra requires system environments featuring a Linux kernel >= 6.6.

The default system on the hydra nodes is based on Debian 11, but **does not provide functional GPUs**. However, users may deploy the ubuntugh2404-arm64-big environment, which is similar to the official NVIDIA image provided for this machine and provides GPU support.
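When preparing a custom environment, the kernel requirement above (>= 6.6) can be checked from a release string such as the one printed by `uname -r`. This is a minimal sketch, assuming the release string starts with `major.minor`; the function name `kernel_at_least` is hypothetical and not part of any Grid'5000 tooling.

```python
from __future__ import annotations

import re


def kernel_at_least(release: str, minimum: tuple[int, int] = (6, 6)) -> bool:
    """Return True if a kernel release string is at least `minimum` (major, minor)."""
    # Assumption: the release string begins with "<major>.<minor>", e.g. "6.8.0-31-generic".
    match = re.match(r"(\d+)\.(\d+)", release)
    if match is None:
        raise ValueError(f"unrecognised kernel release string: {release!r}")
    version = (int(match.group(1)), int(match.group(2)))
    # Tuples compare element by element, so (6, 10) is correctly newer than (6, 6).
    return version >= minimum
```

On a running node, the function could be called as `kernel_at_least(platform.release())` after importing `platform` from the standard library.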

To submit a job on this cluster, the following command may be used:

oarsub -t exotic -p hydra

This cluster is funded by INRIA and by Laboratoire de l'Informatique du Parallélisme with ENS Lyon support.

[1] Hydra is the largest of the modern constellations according to Wikipedia: https://en.wikipedia.org/wiki/Hydra_(constellation)

[2] https://developer.nvidia.com/blog/nvidia-grace-hopper-superchip-architecture-in-depth/

-- Grid'5000 Team 16:42, 12 June 2025 (CEST)

Cluster "estats" (Jetson nodes in Toulouse) is now kavlan capable

The network topology of the estats Jetson nodes can now be configured, just like for other clusters.

More info in the Network reconfiguration tutorial.

-- Grid'5000 Team 18:25, 21 May 2025 (CEST)

Cluster "chirop" is now in the default queue of Lille with energy monitoring.

Dear users,

We are pleased to announce that the Chirop[1] cluster of Lille is now available in the default queue.

This cluster consists of 5 HPE DL360 Gen10+ nodes with:

Energy monitoring[2] is also available for this cluster[3], provided by newly installed Wattmetres (similar to those already available at Lyon).

This cluster was funded by CPER CornelIA.

[1] https://www.grid5000.fr/w/Lille:Hardware#chirop

[2] https://www.grid5000.fr/w/Energy_consumption_monitoring_tutorial

[3] https://www.grid5000.fr/w/Monitoring_Using_Kwollect#Metrics_available_in_Grid.275000

-- Grid'5000 Team 16:25, 05 May 2025 (CEST)

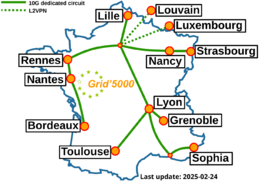

Grid'5000 sites

Current funding

INRIA

CNRS

Universities: IMT Atlantique

Regional councils: Aquitaine